
Documentation

- 1: Overview

- 2: Release specific infos

- 3: Getting Started

- 4: Tutorials

- 4.1: Plan overall ClusterCockpit architecture

- 4.2: ClusterCockpit installation manual

- 4.3: Decide on metric list

- 4.4: Deployment

- 4.5: Setup of cc-metric-store

- 4.6: Setup of cc-metric-collector

- 4.7: Setup of cc-backend

- 5: How-to Guides

- 5.1: Configure retention policies

- 5.2: How to set up hierarchical metric collection

- 5.3: Database migrations

- 5.4: Job archive migrations

- 5.5:

- 5.6: Hands-On Demo

- 5.7: How to add a MOD notification banner

- 5.8: How to create a `cluster.json` file

- 5.9: How to customize cc-backend

- 5.10: How to deploy and update cc-backend

- 5.11: How to enable and configure auto-tagging

- 5.12: How to generate JWT tokens

- 5.13: How to plan and configure resampling

- 5.14: How to regenerate the Swagger UI documentation

- 5.15: How to setup a systemd service

- 5.16: How to use the REST API Endpoints

- 5.17: How to use the Swagger UI documentation

- 6: Explanation

- 6.1: Authentication

- 6.2: Configuration Management

- 6.3: InfluxDB Line Protocol

- 6.4: JSON Web Token

- 6.5: Metric Store

- 6.6: NATS messaging

- 6.7: Roles

- 7: Reference

- 7.1: cc-backend

- 7.1.1: Command Line

- 7.1.2: Configuration

- 7.1.3: Environment

- 7.1.4: REST API

- 7.1.5: Authentication Handbook

- 7.1.6: Job Archive Handbook

- 7.1.7: Schemas

- 7.1.7.1: Application Config Schema

- 7.1.7.2: Cluster Schema

- 7.1.7.3: Job Data Schema

- 7.1.7.4: Job Statistics Schema

- 7.1.7.5: Unit Schema

- 7.1.7.6: Job Archive Metadata Schema

- 7.1.7.7: Job Archive Metrics Data Schema

- 7.1.8: Tools

- 7.1.8.1: archive-manager

- 7.1.8.2: archive-migration

- 7.1.8.3: convert-pem-pubkey

- 7.1.8.4: gen-keypair

- 7.1.8.5: grepCCLog.pl

- 7.1.8.6: Metric Generator Script

- 7.2: cc-metric-store

- 7.2.1: Command Line

- 7.2.2: Configuration

- 7.2.3: Metric Store REST API

- 7.3: cc-metric-collector

- 7.3.1: Configuration

- 7.3.2: Installation

- 7.3.3: Usage

- 7.3.4: Metric Router

- 7.3.5: Collectors

- 7.4: cc-slurm-adapter

- 7.4.1: Installation

- 7.4.2: cc-slurm-adapter Configuration

- 7.4.3: Daemon Setup

- 7.4.4: Prolog/Epilog Hooks

- 7.4.5: Usage

- 7.4.6: Troubleshooting

- 7.4.7: Architecture

- 7.4.8: API Integration

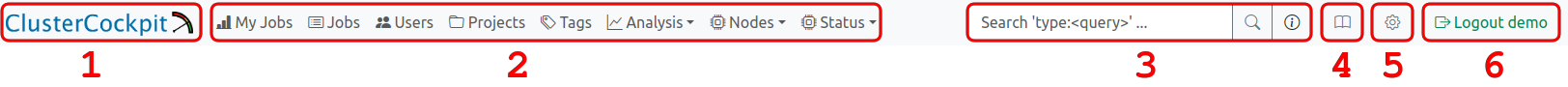

- 8: Web Interface

- 9: Contribution Guidelines

1 - Overview

What does it do?

ClusterCockpit is a framework for job-specific performance and power monitoring on distributed HPC clusters. It is designed with a strong focus on ease of installation and maintenance, high security, and intuitive usability.

ClusterCockpit provides a modern web interface offering tailored views for different user groups.

For HPC users

- A comprehensive overview of running and completed batch jobs

- Access to a wide range of job-level metrics, including hardware performance counters and power data

- Flexible sorting, filtering, and tagging of jobs

- Support for identifying performance bottlenecks and inefficient resource usage

For support staff

- Unified access to job data across multiple clusters

- Advanced filtering and sorting by job, user, or system

- Customizable statistical analyses with aggregated job and user data

- A cluster status dashboard for quick detection of system-wide issues

For administrators

- Single-file deployment of the ClusterCockpit web backend with Systemd integration

- Node agents available as RPM and DEB packages

- Multiple authentication options, including local accounts, LDAP, OpenID Connect, and JWT

- A comprehensive REST/NATS API for integration with batch schedulers and existing monitoring infrastructures

ClusterCockpit is used in production at several HPC computing centers, demonstrating its maturity and suitability for real-world HPC operations (see the list of users).

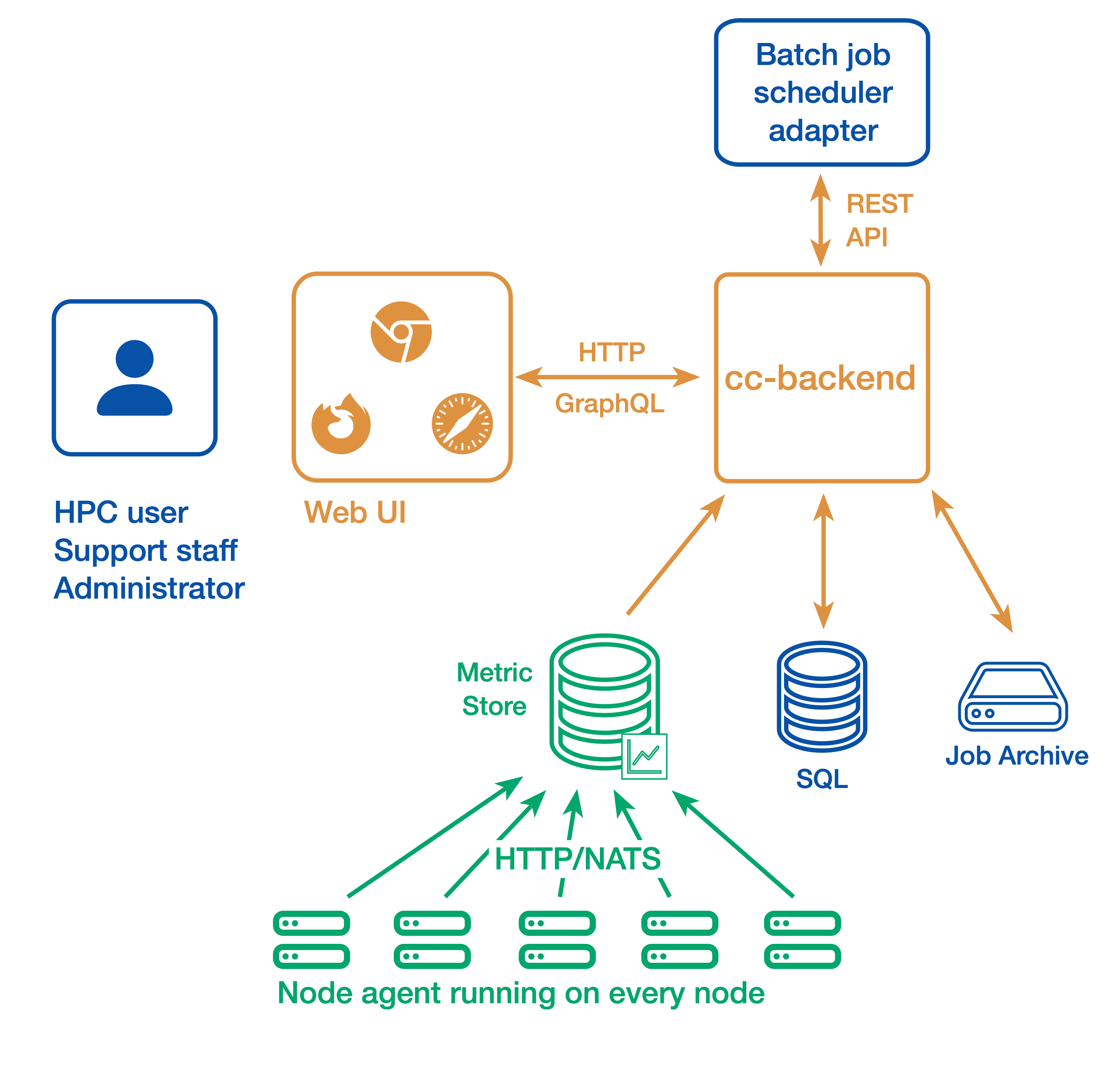

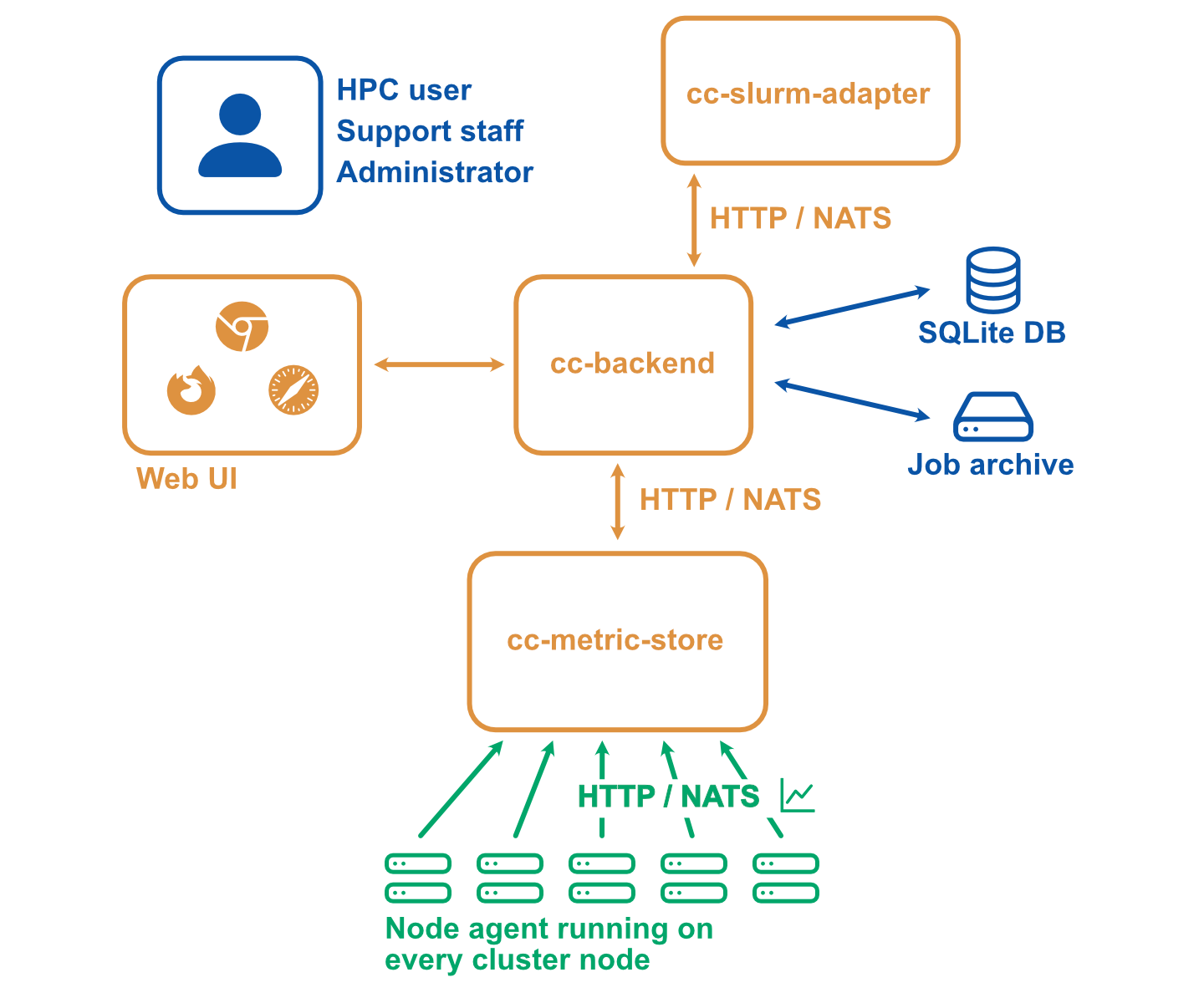

How does it work?

Simple setup

In a simple setup ClusterCockpit consists of the following components:

- The web user interface and API backend: cc-backend

- The node-level metric collection agent (one per compute node): cc-metric-collector

- The Slurm scheduler adapter: cc-slurm-adapter

Node-level metrics are collected continuously by the metric collector and sent to the backend at fixed intervals. Job metadata is provided by one Slurm adapter per Slurm Controller or by a custom adapter for other batch job schedulers and is transmitted to cc-backend via HTTP or NATS.

Job metadata is stored in an internal SQLite database. For running jobs, cc-backend queries an internal metrics store to retrieve all required time-series data. Once a job has finished, its complete dataset—including metadata and metrics—is persisted to a JSON based job archive.

cc-backend supports multiple archive backends:

- A file-based archive

- A single-file SQLite-based archive

- An S3-compatible object store

Finished jobs are loaded on demand from the job archive. The internal metrics store uses a memory pool, retaining time-series data only as long as used by running jobs. This design enables data retention policies and allows ClusterCockpit to operate with minimal maintenance overhead.

Alternative setup

A more complicated setup with multiple clusters or stricter requirements with regard to security may look as follows:

- The web user interface and API backend (There is always only one backend instance): cc-backend

- The node-level metric collection agent (one per compute node): cc-metric-collector

- The Slurm scheduler adapter (one per Slurm controller): cc-slurm-adapter

- Optional: External cc-metric-store. This can be a single instance for all clusters, or any other distribution up to one instance per subcluster. You can also mix both approaches and use the internal metric store for some clusters and one or more external metric stores for others.

The rest of the architecture is the same as above.

Where to go next?

- Getting Started: Set up and explore a local ClusterCockpit demo

- Installation manual: Plan, configure, and deploy a production ClusterCockpit installation

- User guide: Learn how to use the ClusterCockpit web interface

Documentation Structure

- Tutorials: Step-by-step guides for configuring and deploying ClusterCockpit

- How-to Guides: Practical solutions to common tasks and problems

- Explanation: Background information, concepts, and terminology used in ClusterCockpit

- Reference: Detailed technical reference documentation

2 - Release specific infos

cc-backend version 1.5.0

Supports job archive version 3 and database version 10.

Breaking changes

Configuration changes

- JSON attribute naming: All JSON configuration attributes now use `kebab-case` style consistently (e.g., `api-allowed-ips` instead of `apiAllowedIPs`). Update your `config.json` accordingly.

- Removed `disable-archive` option: This obsolete configuration option has been removed.

- Removed `clusters` config section: The separate clusters configuration section has been removed. Cluster information is now derived from the job archive.

- `apiAllowedIPs` is now optional: If not specified, defaults to not restricted.
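The renaming to kebab-case can be scripted. The following is a minimal sketch, not an official migration tool: it assumes that every attribute maps mechanically from camelCase to kebab-case, which may not hold for every option, so compare the result with the configuration reference. The function names and the `someOption` key are hypothetical.

```python
import json
import re


def to_kebab(name: str) -> str:
    # Insert a hyphen at each lowercase/digit -> uppercase boundary, then lowercase.
    return re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "-", name).lower()


def rename_keys(node):
    # Recursively rename the keys of nested dicts and lists; values are untouched.
    if isinstance(node, dict):
        return {to_kebab(key): rename_keys(value) for key, value in node.items()}
    if isinstance(node, list):
        return [rename_keys(item) for item in node]
    return node


# Hypothetical old-style fragment; a real config.json would be read from disk.
old = json.loads('{"apiAllowedIPs": ["127.0.0.1"], "nested": {"someOption": 1}}')
new = rename_keys(old)
print(json.dumps(new, indent=2))
```

Since removed options such as `disable-archive` and the `clusters` section are not handled by this sketch, they still have to be deleted by hand.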

Architecture changes

- MySQL/MariaDB support removed: Only SQLite is now supported as the database backend.

- Web framework replaced: Migrated from `gorilla/mux` to `chi` as the HTTP router. A proper 404 handler is now in place.

- MetricStore moved: The `metricstore` package has been moved from `internal/` to `pkg/` as it is now part of the public API.

- `minRunningFor` filter removed: This undocumented filter has been removed from the API and frontend. A new Short jobs quick-filter button replaces it.

Major new features

- NATS API Integration: Subscribe to real-time job start/stop events and node state changes via NATS. NATS subjects are configurable via `api-subjects`.

- Public Dashboard: New public-facing dashboard route at `/public` for external users.

- Enhanced Node Management: New node state tracking table with timestamp tracking, filtering, and configurable retention/archiving to Parquet format.

- Health Monitoring: New dedicated Health tab in the status details view showing per-node metric health across the cluster. Supports querying external cc-metric-store (CCMS) health status via the API.

- Web-based Log Viewer: Inspect backend log output directly from the browser via the admin interface without requiring shell access.

- Job Tagging System: Automatic detection of applications (MATLAB, GROMACS, etc.) and pathological job classification. Taggers can be triggered on-demand from the admin web interface.

- Parquet Archive Format: New Parquet file format for job archiving with columnar storage and efficient zstd compression. Full S3 and SQLite blob backends are also supported.

- Unified Archive Retention: Job archive retention supports both JSON and Parquet as target formats under a single consistent policy configuration.

What you need to do

- Update `config.json`: Rename all configuration attributes to `kebab-case` (e.g., `apiAllowedIPs` → `api-allowed-ips`). See the configuration reference for the full list of options.

- Review cluster configuration: Cluster information is now derived from the job archive. Remove the `clusters` section from `config.json` and ensure your `cluster.json` files in the job archive are up to date.

- Migrate your job database to version 10 (see Database migration).

- Migrate your job archive to version 3 (see Job Archive migration).

- If using NATS, configure the new `nats` and `api-subjects` sections.

- If using archive retention, configure the `target-format` option to choose between `json` (default) and `parquet` output formats.

- Consider enabling nodestate retention if you track node states over time.

Configuration changes

Complete configuration examples are available in the GitHub repository. All configuration options are checked against a JSON schema.

New configuration options

{

"main": {

"enable-job-taggers": true,

"resampling": {

"minimum-points": 600,

"trigger": 180,

"resolutions": [240, 60]

},

"api-subjects": {

"subject-job-event": "cc.job.event",

"subject-node-state": "cc.node.state"

}

},

"nats": {

"address": "nats://0.0.0.0:4222",

"username": "root",

"password": "root"

},

"cron": {

"commit-job-worker": "1m",

"duration-worker": "5m",

"footprint-worker": "10m"

},

"metric-store": {

"cleanup": {

"mode": "archive",

"interval": "48h",

"directory": "./var/archive"

}

},

"archive": {

"retention": {

"policy": "delete",

"age": "6months",

"target-format": "parquet"

}

},

"nodestate": {

"retention": {

"policy": "archive",

"age": "30d",

"archive-path": "./var/nodestate-archive"

}

}

}

Transfer cc-metric-store checkpoints

cc-backend now offers an integrated metric store. In this setup cc-backend and cc-metric-store share the same configuration and run on the same server, and the checkpoints of the internal cc-metric-store reside in the var directory of cc-backend. If you choose cc-metric-store-internal as your metric store, you can bring over the checkpoints from your previous external cc-metric-store installation as follows.

Look for the "checkpoints" key in the config.json of both cc-metric-store (CCMS) and cc-backend (CCB):

"checkpoints": {

"directory": "./var/checkpoints",

"restore": "48h"

},

You can either move the checkpoints manually or use the following script:

#!/bin/bash

# Paths of the "directory" entries configured in the CCMS and CCB config.json.

# Replace the dummy paths below with your own.

CCMS_CHECKPOINTS_DIR="/home/dummy/cc-metric-store/var/checkpoints"

CCB_CHECKPOINTS_DIR="/home/dummy/cc-backend/var/checkpoints"

# Check that the source directory actually exists

if [ -d "$CCMS_CHECKPOINTS_DIR" ]; then

  # Create the target directory (including missing parents) if necessary

  mkdir -p "$CCB_CHECKPOINTS_DIR"

  # Move the contents of the old checkpoint directory into the new one

  mv -f "$CCMS_CHECKPOINTS_DIR"/* "$CCB_CHECKPOINTS_DIR"/ &&

    echo "Success: checkpoints moved from $CCMS_CHECKPOINTS_DIR to $CCB_CHECKPOINTS_DIR"

else

  echo "Error: Directory '$CCMS_CHECKPOINTS_DIR' does not exist."

fi

Known issues

- The new dynamic memory management is not yet bulletproof across restarts. We will fix that in a subsequent patch release.

- Currently energy footprint metrics of type energy are ignored for calculating total energy.

- With energy footprint metrics of type power the unit is ignored and it is assumed the metric has the unit Watt.

3 - Getting Started

The central component of ClusterCockpit is the web and API backend cc-backend. We provide a demo setup that allows you to get an impression of the web interface. If you just want to try the demo and you have a Linux OS, you can do so using the cc-backend release binary. Detailed instructions are given in the section "Demo with release binary".

If you have a different OS or want to build cc-backend yourself, follow the instructions below.

Prerequisites

To build cc-backend you need:

- A Go compiler, version 1.25 or newer. Most recent OS environments should have a package with a recent enough version. On macOS we recommend using Homebrew to install one.

- A Node.js environment including the `npm` package manager.

- A git revision control client.

- For the demo shell script you need `wget` to download the example job archive.

Try it out

All ClusterCockpit components are available within the GitHub ClusterCockpit project.

Clone cc-backend and change directory into the repository:

git clone https://github.com/ClusterCockpit/cc-backend.git && cd cc-backend

Note

The startDemo script will download a tar file with 38MB (223MB on disk)!

Execute the demo start script:

./startDemo.sh

What follows is output from building cc-backend and downloading the job-archive

HTTP server listening at 127.0.0.1:8080...

Open a web browser and access http://localhost:8080.

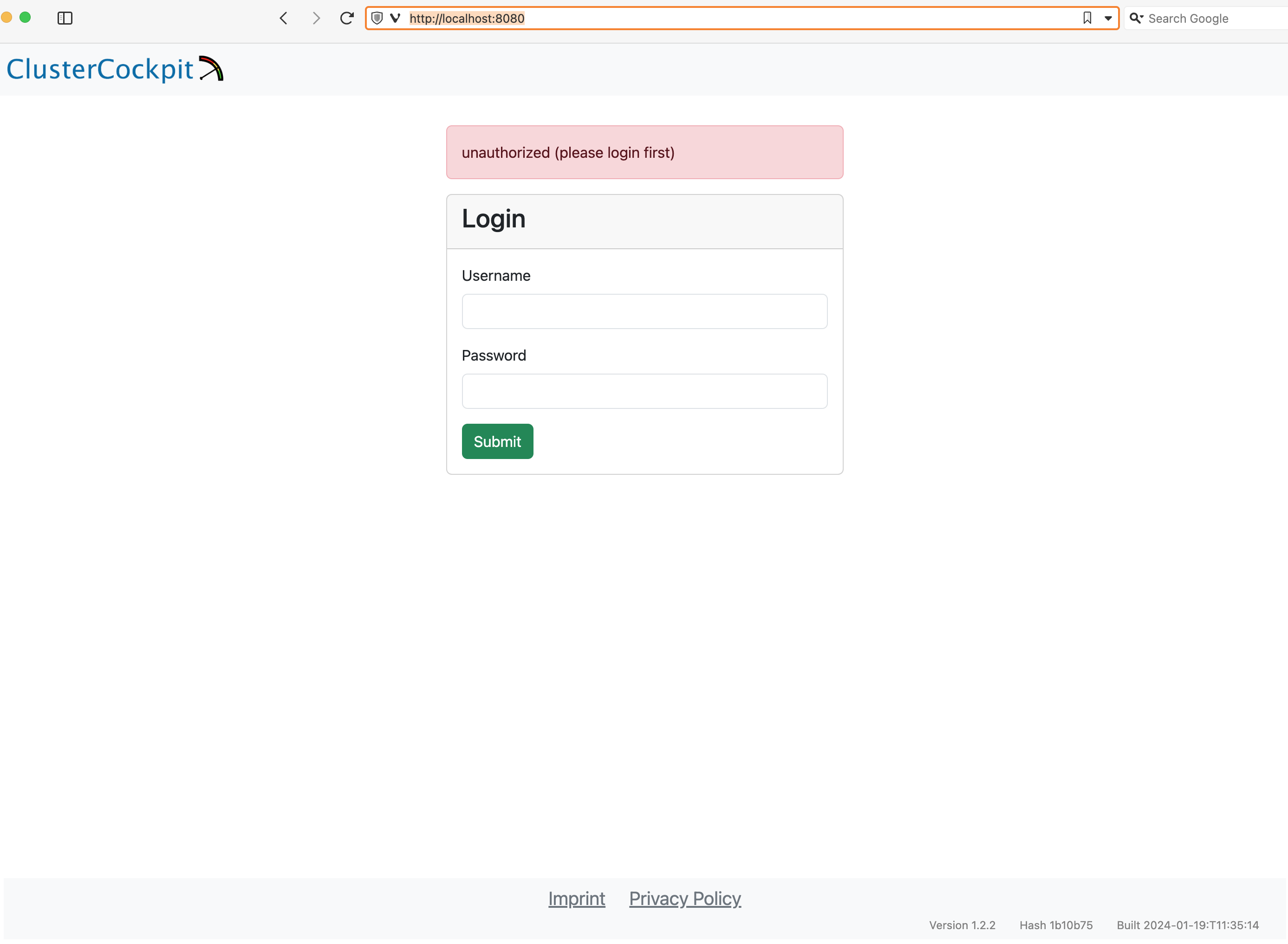

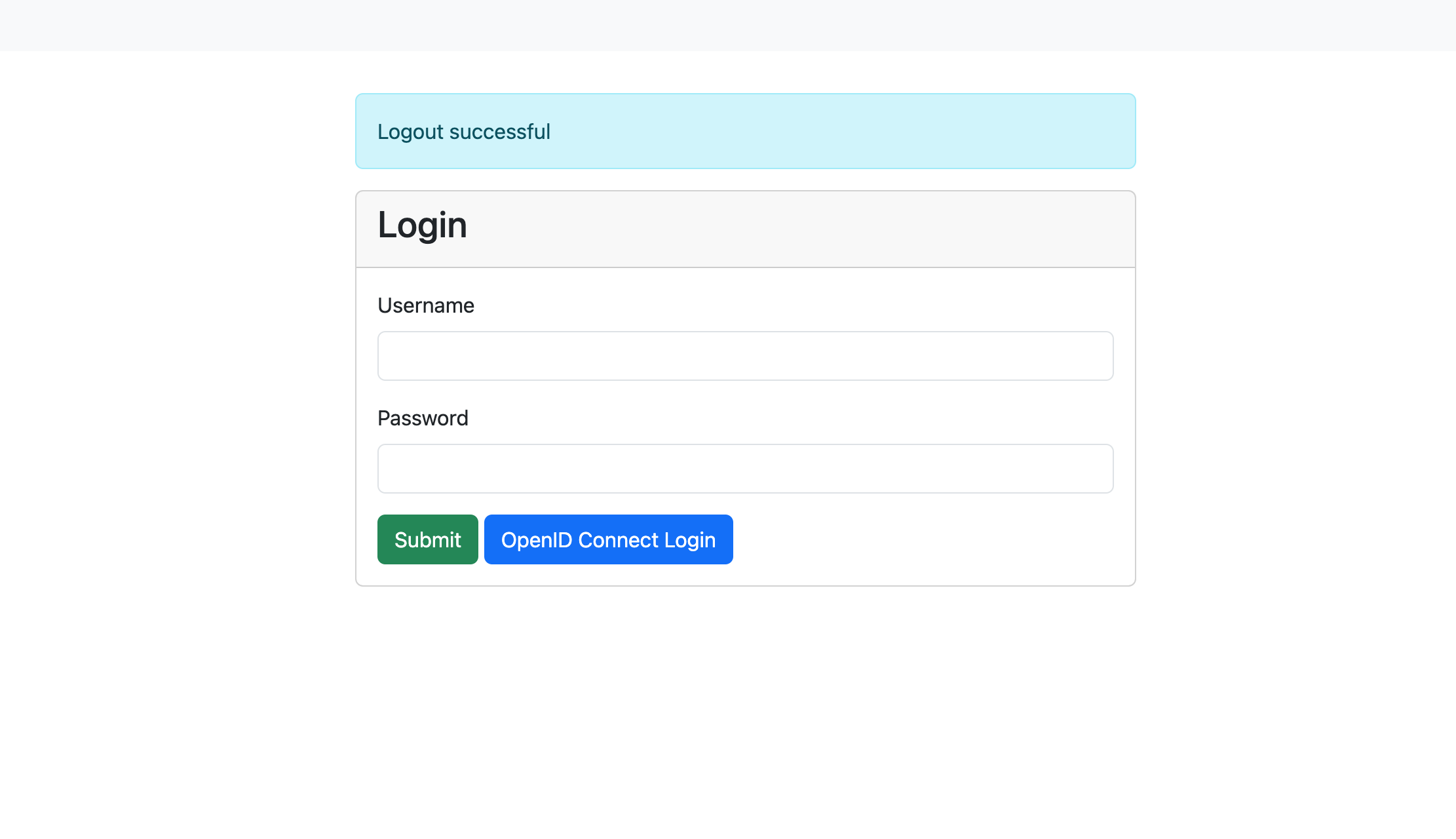

You should see the ClusterCockpit login page:

Enter demo for the Username and demo for the Password and press the Submit

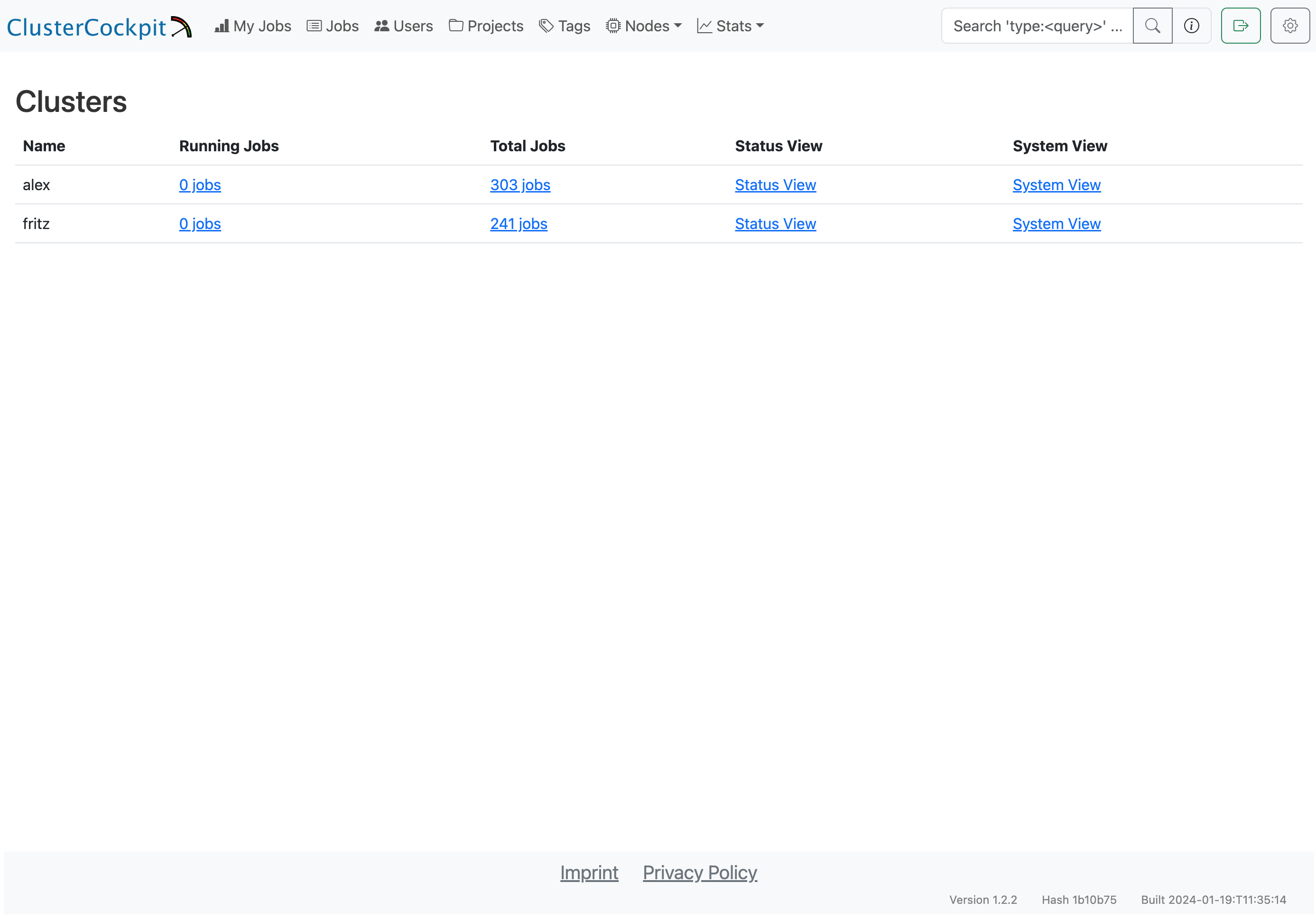

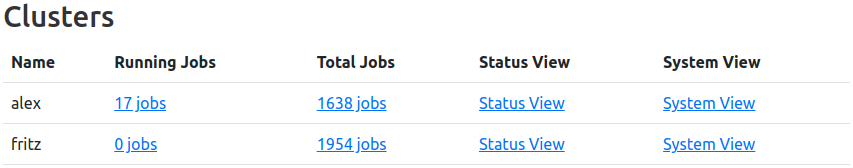

button. After that the ClusterCockpit index page should be displayed:

The demo user has the admin role and therefore can see all views.

Note

Because the demo only loads data from the job archive, some views such as the status and systems views do not work!

For details about the features of the web interface, have a look at the user guide.

Installation

We provide an installation manual that guides you through planning and configuring a production ClusterCockpit deployment. If you are a computing center and face problems, do not hesitate to ask for help in our communication channels.

3.1 - Demo with release binary

The demo setup with the release binary only works with a Linux system running on an x86-64 processor.

Grab the release binary at GitHub. The following description assumes you perform all tasks from your home folder. Extract the tar archive:

tar xzf cc-backend_Linux_x86_64.tar.gz

Create an empty folder and copy the binary cc-backend from the extracted archive folder to this folder:

mkdir ./demo

cp cc-backend ./demo

Change to the demo folder and run the following command to set up the required var directory and to initialize the SQLite database, config.json and .env files:

./cc-backend -init

Open config.json in an editor of your choice to edit the existing cluster's name and add a second cluster. Name the clusters fritz and alex. The file should look as below afterwards:

| |

Download the demo job archive:

wget https://hpc-mover.rrze.uni-erlangen.de/HPC-Data/0x7b58aefb/eig7ahyo6fo2bais0ephuf2aitohv1ai/job-archive-demo.tar

Extract the job archive:

tar xf job-archive-demo.tar

Initialize the database using the data from the job archive and create the demo user:

./cc-backend -init-db -add-user demo:admin:demo -loglevel info

Start the web server:

./cc-backend -server -dev -loglevel info

Open a web browser and access http://localhost:8080.

You should see the ClusterCockpit login page:

Enter demo for the Username and demo for the Password and press the Submit button. After that the ClusterCockpit index page should be displayed:

The demo user has the admin role and therefore can see all views.

Note

Because the demo only loads data from the job archive, some views such as the status and systems views do not work!

For details about the features of the web interface, have a look at the user guide.

4 - Tutorials

4.1 - Plan overall ClusterCockpit architecture

Introduction

When deploying ClusterCockpit in production, two key architectural decisions need to be made:

- Transport mechanism: How metrics flow from collectors to the metric store (REST API vs NATS)

- Metric store deployment: Where the metric store runs (internal to cc-backend vs external standalone)

This guide helps you understand the trade-offs so that you can make informed decisions based on your cluster size, administrative capabilities, and requirements. You may also use a mix of the options described here.

Transport: REST API vs NATS

The cc-metric-collector can send metrics to cc-metric-store using either direct HTTP REST API calls or via NATS messaging.

REST API Transport

With REST transport, each collector node sends HTTP POST requests directly to the metric store endpoint.

┌─────────────┐ HTTP POST ┌──────────────────┐

│ Collector │ ─────────────────► │ cc-metric-store │

│ (Node 1) │ │ │

└─────────────┘ │ │

┌─────────────┐ HTTP POST │ │

│ Collector │ ─────────────────► │ │

│ (Node 2) │ └──────────────────┘

└─────────────┘

...
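To make the REST path more concrete, here is a minimal sketch of what a single collector-side POST could look like. It assumes that metrics are encoded as InfluxDB line protocol (see the explanation section of the same name) and that requests carry a JWT as bearer token. The tag names, the URL passed to `build_post`, and both function names are illustrative assumptions, not the documented cc-metric-store API. Line protocol escaping is omitted.

```python
import urllib.request


def format_line(metric, value, cluster, hostname, timestamp, scope="node"):
    # One sample in line protocol form: name,tags field timestamp.
    # The tag names (cluster, hostname, type) are assumptions for this sketch.
    tags = f"cluster={cluster},hostname={hostname},type={scope}"
    return f"{metric},{tags} value={value} {timestamp}"


def build_post(url, lines, jwt):
    # Build (but do not send) an HTTP POST carrying several samples at once.
    body = "\n".join(lines).encode("utf-8")
    req = urllib.request.Request(url, data=body, method="POST")
    req.add_header("Authorization", f"Bearer {jwt}")
    return req


line = format_line("flops_any", 42.0, "mycluster", "node001", 1700000000)
req = build_post("http://localhost:8080/hypothetical-write-endpoint", [line], "<JWT>")
print(line)
print(req.get_method(), req.full_url)
```

Because every node issues such requests on its own, the number of concurrent connections at the metric store grows with the node count, which is the scaling concern listed below.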

Advantages:

- Simple setup with no additional infrastructure

- Direct point-to-point communication

- Easy to debug and monitor

- Works well for smaller clusters (< 500 nodes)

Disadvantages:

- Each collector needs direct network access to metric store

- No buffering: if metric store is unavailable, metrics are lost

- Scales linearly with node count (many concurrent connections)

- Higher load on metric store during burst scenarios

NATS Transport

With NATS, collectors publish metrics to a NATS server, and the metric store subscribes to receive them.

┌─────────────┐ ┌─────────────┐

│ Collector │ ──► publish ──► │ │

│ (Node 1) │ │ │

└─────────────┘ │ NATS │ subscribe ┌──────────────────┐

┌─────────────┐ │ Server │ ◄───────────────► │ cc-metric-store │

│ Collector │ ──► publish ──► │ │ └──────────────────┘

│ (Node 2) │ │ │

└─────────────┘ └─────────────┘

...

Advantages:

- Decoupled architecture: collectors and metric store are independent

- Built-in buffering and message persistence

- Better scalability for large clusters (1000+ nodes)

- Supports multiple subscribers (e.g., external metric store for redundancy)

- Collectors continue working even if metric store is temporarily down

- Lower connection overhead (single connection per collector to NATS)

- Integrated key management via NKeys (Ed25519-based authentication):

  - No need to generate and distribute JWT tokens to each collector

  - Centralized credential management in NATS server configuration

  - Support for accounts with fine-grained publish/subscribe permissions

  - Credential revocation without redeploying collectors

  - Simpler key rotation compared to JWT token redistribution

Disadvantages:

- Additional infrastructure component to deploy and maintain

- More complex initial setup and configuration

- Additional point of failure (NATS server)

- Requires NATS expertise for troubleshooting

Recommendation

| Cluster Size | Recommended Transport |

|---|---|

| < 200 nodes | REST API |

| 200-500 nodes | Either (based on preference) |

| > 500 nodes | NATS |

For large clusters or environments requiring high availability, NATS provides better resilience and scalability. For smaller deployments or when minimizing complexity is important, REST API is sufficient.
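The table above can be expressed as a small helper, for example to annotate an inventory of clusters. This is only a sketch of the size-based rule of thumb. The function name is hypothetical, and other factors such as availability requirements are deliberately ignored.

```python
def recommend_transport(node_count: int) -> str:
    """Return the transport suggested by the size-based table."""
    if node_count < 200:
        return "REST API"
    if node_count <= 500:
        return "Either (based on preference)"
    return "NATS"


for nodes in (64, 350, 2000):
    print(nodes, "->", recommend_transport(nodes))
```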

Metric Store: Internal vs External

The cc-metric-store storage engine can run either integrated within cc-backend (internal) or as a separate standalone service (external).

Internal Metric Store

The metric store runs as part of the cc-backend process, sharing the same configuration and lifecycle.

┌────────────────────────────────────────┐

│ cc-backend │

│ ┌──────────────┐ ┌────────────────┐ │

│ │ Web UI & │ │ metric-store │ │

│ │ GraphQL │ │ (internal) │ │

│ └──────────────┘ └────────────────┘ │

└────────────────────────────────────────┘

│ ▲

▼ │

┌─────────┐ ┌─────────┐

│ Browser │ │Collector│

└─────────┘ └─────────┘

Advantages:

- Single process to deploy and manage

- Unified configuration

- Simplified networking (metrics received on same endpoint)

- Lower resource overhead

- Easier initial setup

Disadvantages:

- Metric store restart requires cc-backend restart and the other way around

- A cc-backend restart can take more than a minute since the metric store checkpoints have to be loaded on startup

- Resource contention between web serving and metric ingestion

- No horizontal scaling of metric ingestion

- Single point of failure for entire system

External Metric Store

The metric store runs as a separate process, communicating with cc-backend via its REST API.

┌──────────────────┐ ┌──────────────────┐

│ cc-backend │ ◄─────► │ cc-metric-store │

│ (Web UI/API) │ query │ (external) │

└──────────────────┘ └──────────────────┘

│ ▲

▼ │

┌─────────┐ ┌─────────┐

│ Browser │ │Collector│

└─────────┘ └─────────┘

Advantages:

- Independent scaling and resource allocation

- Can restart metric store without affecting web interface and the other way around

- Enables redundancy with multiple metric store instances

- Better isolation for security and resource management

- Can run on dedicated hardware optimized for in-memory workloads

Disadvantages:

- Two services to deploy and manage

- Separate configuration files

- Additional network communication between components

- More complex setup and monitoring

Recommendation

| Scenario | Recommended Deployment |

|---|---|

| Development/Testing | Internal |

| Small production (< 200 nodes) | Internal |

| Medium production (200-1000 nodes) | Either |

| Large production (> 1000 nodes) | External |

| Resource-constrained head node | External (on dedicated host) |
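As a sketch, the scenarios in the table can be folded into one function. The function name and parameters are hypothetical, and giving the resource-constrained case precedence over the size-based rows is an assumption of this example, since the table does not rank its rows.

```python
def recommend_deployment(node_count: int, production: bool = True,
                         constrained_head_node: bool = False) -> str:
    """Return the metric store deployment suggested by the table."""
    if constrained_head_node:
        # Assumption: a resource-limited head node overrides the size rule.
        return "External (on dedicated host)"
    if not production or node_count < 200:
        return "Internal"
    if node_count <= 1000:
        return "Either"
    return "External"


print(recommend_deployment(50, production=False))
print(recommend_deployment(600))
print(recommend_deployment(4000))
```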

Security Considerations

Network Exposure

| Component | REST Transport | NATS Transport |

|---|---|---|

| Metric Store | Exposed to all collector nodes | Only exposed to NATS server |

| NATS Server | N/A | Exposed to all collectors and metric stores |

| cc-backend | Exposed to users | Exposed to users |

With NATS, the metric store can be isolated from the cluster network, reducing the attack surface. The NATS server becomes the single point of ingress for metrics. Another option to isolate the web backend from the cluster network is to set up cc-metric-collector proxies.

Authentication

- REST API: Uses JWT tokens (Ed25519 signed). Each collector needs a valid token configured and distributed to it.

- NATS: Supports multiple authentication methods:

  - Username/password (simple, suitable for smaller deployments)

  - NKeys (Ed25519 key pairs managed centrally in NATS server)

  - Credential files (`.creds`) for decentralized authentication

  - Accounts for multi-tenancy with isolated namespaces

NKeys Advantage: With NATS NKeys, authentication keys are managed in the NATS server configuration rather than distributed to each collector. This simplifies credential management significantly:

- Add/remove collectors by editing NATS server config

- Revoke access instantly without touching collector nodes

- No JWT token expiration to manage

- Keys can be scoped to specific subjects (publish-only for collectors)

For both transports, ensure:

- Keys are properly generated and securely stored

- TLS is enabled for production deployments

- Network segmentation isolates monitoring traffic

Privilege Separation

Both cc-backend and the external cc-metric-store support dropping

privileges after binding to privileged ports (via user and group

configuration). This limits the impact of potential vulnerabilities.

Performance Considerations

Memory Usage

The metric store keeps data in memory based on retention-in-memory. Memory

usage scales with:

- Number of nodes

- Number of metrics per node

- Number of hardware scopes (cores, sockets, accelerators)

- Retention duration

- Metric frequency

For a 1000-node cluster with 20 metrics at 60-second intervals and 48-hour retention, expect approximately 10-20 GB of memory usage. For larger setups and many core level metrics this can increase up to 100GB, which must fit into main memory.
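The scaling factors listed above can be combined into a back-of-the-envelope calculation. The sketch below counts only the raw sample payload. The 8 bytes per sample and the `series_per_metric` multiplier (how many hardware-scope series exist per metric and node, for example one per core) are assumptions of this example and not taken from the cc-metric-store implementation, and real memory usage includes additional overhead.

```python
def estimate_bytes(nodes: int, metrics: int, interval_s: int, retention_h: int,
                   bytes_per_sample: int = 8, series_per_metric: int = 1) -> int:
    """Rough lower bound for the raw sample data held in memory."""
    samples_per_series = retention_h * 3600 // interval_s
    series = nodes * metrics * series_per_metric
    return series * samples_per_series * bytes_per_sample


# Node-level series only, for the example cluster from the text.
node_only = estimate_bytes(nodes=1000, metrics=20, interval_s=60, retention_h=48)
# Same cluster, assuming every metric is also kept for 32 finer-grained scopes.
fine_grained = estimate_bytes(1000, 20, 60, 48, series_per_metric=32)

print(f"node-level only: {node_only / 1e9:.2f} GB")
print(f"32 series per metric: {fine_grained / 1e9:.2f} GB")
```

The gap between the two results illustrates why the number of hardware scopes, rather than the node count alone, tends to dominate the memory footprint.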

CPU Usage

- Internal: Competes with cc-backend web serving

- External: Dedicated resources for metric processing

For clusters with high query load (many users viewing job details), external deployment prevents metric ingestion from impacting user experience.

Disk I/O

Checkpoints are written periodically. For large deployments:

- Use fast storage (SSD) for checkpoint directory

- Consider separate disks for checkpoints and archives

- Monitor disk space for archive growth

- ClusterCockpit supports storing archives on an external S3-compatible object store

Example Configurations

Small Cluster (Internal + REST)

Single cc-backend with internal metric store, collectors using REST:

// cc-backend config

{

"metric-store": {

"retention-in-memory": "48h",

"memory-cap": 100,

"checkpoints": {

"directory": "./var/checkpoints"

}

}

}

Large Cluster (External + NATS)

Separate cc-metric-store with NATS transport:

// cc-metric-store config

{

"main": {

"addr": "0.0.0.0:8080",

"jwt-public-key": "..."

},

"nats": {

"address": "nats://nats-server:4222",

"username": "ccms",

"password": "..."

},

"metric-store": {

"retention-in-memory": "48h",

"memory-cap": 80,

"checkpoints": {

"directory": "/data/checkpoints"

},

"cleanup": {

"mode": "archive",

"directory": "/data/archive"

},

"nats-subscriptions": [

{

"subscribe-to": "hpc-metrics",

"cluster-tag": "mycluster"

}

]

}

}

Decision Checklist

Use this checklist to guide your architecture decision:

- Cluster size: How many nodes need monitoring?

- Availability requirements: Is downtime acceptable?

- Administrative capacity: Can you manage additional services?

- Network topology: Can collectors reach the metric store directly?

- Resource constraints: Is the head node resource-limited?

- Security requirements: Do you need network isolation?

- Growth plans: Will the cluster expand significantly?

For most new deployments, starting with internal metric store and REST transport is recommended. You can migrate to external deployment and/or NATS later as needs evolve.

4.2 - ClusterCockpit installation manual

Introduction

ClusterCockpit requires the following components:

- A node agent running on all compute nodes that measures required metrics and forwards all data to a time series metrics database. ClusterCockpit provides its own node agent `cc-metric-collector`. This is the recommended setup, but ClusterCockpit can also be integrated with other node agents, e.g. `collectd`, `prometheus` or `telegraf`. In this case you have to use it with the accompanying time series database and ensure the metric data is sent or forwarded to `cc-backend`.

- The API and web interface backend `cc-backend`. Only one instance of `cc-backend` is required. This will provide the HTTP server at the desired monitoring URL for serving the web interface. It also integrates an in-memory metric store.

- A SQL database. The only supported option is to use the built-in SQLite database for ClusterCockpit. It is recommended to set up LiteStream as a service which performs a continuous replication of the SQLite database to multiple storage backends.

- (Optional) Metric store: One or more `cc-metric-store` instances. Advantages of using an external cc-metric-store are:

  - Independent scaling and resource allocation

  - Can restart metric store without affecting web interface and the other way around

  - Enables redundancy with multiple metric store instances

  - Better isolation for security and resource management

  - Can run on dedicated hardware optimized for in-memory workloads

- (Optional) NATS message broker: Apart from REST APIs ClusterCockpit also supports NATS as a way to connect components. Using NATS brings a number of advantages:

  - More flexible deployment and testing. Instances can have different URLs or IP addresses. Test instances are easy to deploy in parallel without a need to touch the configuration.

  - NATS comes with sophisticated built-in token and key management. This also makes it possible to restrict authorization to specific subjects.

  - NATS may provide a larger message throughput compared to REST over HTTP.

  - Upcoming ClusterCockpit components such as the Energy Manager require NATS.

- A batch job scheduler adapter that provides the job meta information to `cc-backend`. This is done by using the provided REST or NATS API for starting and stopping jobs. Currently available adapters:

  - Slurm: Golang based solution (cc-slurm-adapter) maintained by NHR@FAU. This is the recommended option in case you use Slurm. All functionality in `cc-backend` is supported.

  - Slurm: Python based solution (cc-slurm-sync) maintained by PC2 Paderborn

  - HTCondor: cc-condor-sync maintained by Saarland University

Server Hardware

cc-backend is threaded and therefore profits from multiple cores. Enough memory is required to hold the metric data cache. For most setups 128GB should be enough. You can set an upper limit for the memory capacity used by the internal in-memory metric cache. How much memory is required depends not only on the resource count but also on the frequency of the time series data. Starting with cc-backend v1.5.0 you no longer need a safety margin for memory retention.

cc-backend can run in a virtual machine. For best performance the ./var folder of cc-backend, which contains the SQLite database file and the file-based job archive, should be located on a fast storage device, ideally an NVMe SSD. The SQLite database file and the job archive will grow over time (unless you remove old jobs using a retention policy). Our setup covering multiple clusters over 5 years takes 75GB for the SQLite database and around 1.4TB for the job archive. If you have very high job counts, we recommend using a retention policy to keep the database and the job archive at a manageable size. If you archive old jobs, the database can be easily restored using cc-backend.

It is recommended to run cc-backend as a systemd service. Example systemd unit

files are available in the ClusterCockpit component repositories and in the

cc-examples repository.

Planning and initial configuration

We recommend the following order for planning and configuring a ClusterCockpit installation:

- Decide on overall setup: Initially you have to decide on some fundamental design options about how the components communicate with each other and how the data flows from the compute nodes to the backend.

- Set up your metric list: With two exceptions you are in general free to choose which metrics you want. Those exceptions are `mem_bw` for main memory bandwidth and `flops_any` for flop throughput (double precision flops are upscaled to single precision rates). The metric list is an integral component for the configuration of all ClusterCockpit components.

- Planning of deployment

- (Optional) Configure and deploy `cc-metric-store`

- Configure and deploy `cc-metric-collector`

- Configure and deploy `cc-backend`

- Configure and deploy `cc-slurm-adapter` or another job scheduler adapter of your choice

You can find complete example production configurations in the cc-examples repository.

Common problems

The following issues are commonly encountered when installing ClusterCockpit for the first time.

Inconsistent metric names across components

At the moment you need to configure the metric list in every component separately. In cc-metric-collector the metrics that are sent to cc-backend are determined by the collector configuration and possible renaming in the router configuration.

In cc-backend for every cluster you need to create a cluster.json

configuration in the job-archive. There you set up which metrics are shown in the

web-frontend including many additional properties for the metrics. For running

jobs cc-backend will query the internal metric-store for exactly those

metric names and if there is no match there will be an error.

We provide a JSON schema based specification as part of the job meta and metric

schema. This specification recommends a minimal set of metrics and we suggest to

use the metric names provided there. While it is up to you if you want to adhere

to the metric names suggested in the schema, there are two exceptions: mem_bw

(main memory bandwidth) and flops_any (total flop rate with DP flops scaled to

SP flops) are required for the roofline plots to work.
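A mismatch of this kind can be caught before deployment with a small check. The following Python sketch compares the metric names of a cluster.json with the metrics section of a metric store configuration. It assumes that cluster.json lists its metrics under a metricConfig array with a name field per entry (please verify this against the cluster schema of your version); the function name and the sample data are hypothetical.

```python
REQUIRED_FOR_ROOFLINE = {"mem_bw", "flops_any"}


def check_metric_names(cluster_cfg, store_cfg):
    """Return (missing_in_store, missing_for_roofline) as sorted lists."""
    # Assumption: cluster.json keeps its metrics in a "metricConfig" array.
    cluster_metrics = {m["name"] for m in cluster_cfg.get("metricConfig", [])}
    # The metric store configuration maps metric names to their settings.
    store_metrics = set(store_cfg.get("metrics", {}))
    missing_in_store = sorted(cluster_metrics - store_metrics)
    missing_for_roofline = sorted(REQUIRED_FOR_ROOFLINE - cluster_metrics)
    return missing_in_store, missing_for_roofline


# Hypothetical sample data; in practice load both files with json.load().
cluster_cfg = {"metricConfig": [{"name": "mem_bw"}, {"name": "cpu_load"}]}
store_cfg = {"metrics": {"mem_bw": {"frequency": 60, "aggregation": "sum"}}}

missing_in_store, missing_for_roofline = check_metric_names(cluster_cfg, store_cfg)
print("not known to the metric store:", missing_in_store)
print("missing for roofline plots:", missing_for_roofline)
```

The same comparison can be extended to the metric names produced by the collector after router renaming, which is the third place where the names have to agree.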

Inconsistent device naming between cc-metric-collector and batch job scheduler adapter

The batch job scheduler adapter (e.g. cc-slurm-adapter) provides a list of

resources that are used by the job. cc-backend will query the internal metric-store

with exactly those resource ids for getting all metrics for a job.

As a consequence, if cc-metric-collector uses a different naming scheme, the metrics

will not be found.

If you have GPU accelerators cc-slurm-adapter should use the PCI-E device

addresses as ids. The option gpuPciAddrs for the nvidia and

rocm-smi collectors in the collector configuration must be configured.

To validate and debug problems you can use the cc-backend or cc-metric-store

debug endpoint:

curl -H "Authorization: Bearer $JWT" -D - "http://localhost:8080/api/debug"

This will return the current state of cc-metric-store. You can search for a

hostname and scroll there for all topology leaf nodes that are available.

Missing nodes in subcluster node lists

ClusterCockpit supports multiple subclusters as part of a cluster. A subcluster

in this context is a homogeneous hardware partition with a dedicated metric

and device configuration. cc-backend dynamically matches the nodes a job runs

on to a subcluster node list to figure out on which subcluster a job is running.

If nodes are missing in a subcluster node list this fails and the metric list

used may be wrong.
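To illustrate the matching, here is a minimal Python sketch that expands node list expressions and reports job nodes that belong to no subcluster. The node list syntax handled here (a prefix followed by bracketed, comma-separated numbers or ranges, e.g. f[0101-0103]) is a simplified assumption and not the full syntax accepted by cc-backend; all names and sample values are hypothetical.

```python
import re


def expand_node_list(expr):
    """Expand e.g. 'f[0101-0103,0201],g0001' into a set of host names."""
    hosts = set()
    # Split into terms of the form "prefix" or "prefix[...]".
    for term in re.findall(r"[^,\[]+(?:\[[^\]]*\])?", expr):
        match = re.fullmatch(r"([^\[]+)\[([^\]]*)\]", term)
        if match is None:
            hosts.add(term)  # plain host name without a range
            continue
        prefix, ranges = match.groups()
        for part in ranges.split(","):
            start, _, end = part.partition("-")
            end = end or start  # single number, e.g. "0201"
            width = len(start)  # keep the zero padding
            for number in range(int(start), int(end) + 1):
                hosts.add(f"{prefix}{number:0{width}d}")
    return hosts


def nodes_without_subcluster(job_nodes, subclusters):
    """Return the job nodes that match no subcluster node list."""
    known = set()
    for node_list in subclusters.values():
        known |= expand_node_list(node_list)
    return sorted(set(job_nodes) - known)


# Hypothetical subcluster definitions and job resources.
subclusters = {"main": "f[0101-0103]", "gpu": "g[01-02],g10"}
missing = nodes_without_subcluster(["f0102", "f0104", "g10"], subclusters)
print(missing)
```

Running such a check against the complete host list of a cluster shows which nodes still need to be added to a subcluster node list.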

4.3 - Decide on metric list

Introduction

Deciding on a sensible and meaningful set of metrics is a deciding factor for how useful the monitoring will be. As part of a collaborative project, several academic HPC centers came up with a minimal set of metrics including their naming. Consistent naming is crucial for establishing what metrics mean, and we urge you to adhere to the metric names suggested there. You can find this list as part of the ClusterCockpit job data structure JSON schemas.

ClusterCockpit supports multiple clusters within one instance of cc-backend.

You have to create separate metric lists for each of them. In cc-backend the

metric lists are provided as part of the cluster configuration. Every cluster is

configured as part of the

job archive using one

cluster.json file per cluster.

This how-to describes

in-detail how to create a cluster.json file.

Required Metrics

Flop throughput rate: flops_any

Memory bandwidth: mem_bw

Memory capacity used: mem_used

Requested cpu core utilization: cpu_load

Total fast network bandwidth: net_bw

Total file IO bandwidth: file_bw

Recommended CPU Metrics

Instructions throughput in cycles: ipc

User active CPU core utilization: cpu_user

Double precision flop throughput rate: flops_dp

Single precision flop throughput rate: flops_sp

Average core frequency: clock

CPU power consumption: rapl_power

Recommended GPU Metrics

GPU utilization: acc_used

GPU memory capacity used: acc_mem_used

GPU power consumption: acc_power

Recommended node level metrics

Ethernet read bandwidth: eth_read_bw

Ethernet write bandwidth: eth_write_bw

Fast network read bandwidth: ic_read_bw

Fast network write bandwidth: ic_write_bw

File system metrics

Warning

A file system metric tree is currently not yet supported in cc-backend.

In the schema a tree of file system metrics is suggested. This makes it possible to provide a similar set of metrics for different file systems used in a cluster. The file system type names suggested are:

- nfs

- lustre

- gpfs

- nvme

- ssd

- hdd

- beegfs

File system read bandwidth: read_bw

File system write bandwidth: write_bw

File system read requests: read_req

File system write requests: write_req

File system inodes used: inodes

File system open and close: accesses

File system file syncs: fsync

File system file creates: create

File system file open: open

File system file close: close

File system file seeks: seek

4.4 - Deployment

Deployment

Why we do not provide a docker container

The ClusterCockpit web backend binary has no external dependencies, everything is included in the binary. The external assets, SQL database and job archive, would also be external in a docker setup. The only advantage of a docker setup would be that the initial configuration is automated. But this only needs to be done one time. We therefore think that setting up docker, securing and maintaining it is not worth the effort.

It is recommended to install all ClusterCockpit components in a common

directory, e.g. /opt/monitoring, var/monitoring or var/clustercockpit. In

the following we use /opt/monitoring.

A Systemd service runs on the central monitoring server:

- clustercockpit : Binary cc-backend in /opt/monitoring/cc-backend.

- (Optional with external metric-store) cc-metric-store : Binary cc-metric-store in /opt/monitoring/cc-metric-store.

ClusterCockpit is deployed as a single binary that embeds all static assets.

We recommend keeping all cc-backend binary versions in a folder archive and

linking the currently active one from the cc-backend root.

This allows for easy roll-back in case something doesn’t work.

Please Note

cc-backend is started with root rights to open the privileged ports (80 and

443). It is recommended to set the configuration options user and group, in

which case cc-backend will drop root permissions once the ports are taken.

You have to take care, that the ownership of the ./var folder and

its contents are set accordingly.

You also can run cc-backend behind a reverse proxy. In this case it can be

started with an unprivileged user and the reverse proxy takes care of TLS

encryption. This also makes it possible to automatically show a maintenance page in case

ClusterCockpit is not reachable.

Workflow to deploy new version

This example assumes you are deploying ClusterCockpit for the first time or the DB and job archive versions did not change between versions.

- Stop systemd service:

sudo systemctl stop clustercockpit.service

- Backup the sqlite DB file! This is as simple as copying it. You can also use a continuous replication service such as litestream.

- Copy new cc-backend binary to /opt/monitoring/cc-backend/archive (Tip: Use a date tag like YYYYMMDD-cc-backend). Here is an example:

cp ~/cc-backend /opt/monitoring/cc-backend/archive/20231124-cc-backend

- Link from cc-backend root to current version

ln -sfn /opt/monitoring/cc-backend/archive/20231124-cc-backend /opt/monitoring/cc-backend/cc-backend

- Start systemd service:

sudo systemctl start clustercockpit.service

- Check if everything is ok:

sudo systemctl status clustercockpit.service

- Check log for errors:

sudo journalctl -u clustercockpit.service

- Check the ClusterCockpit web frontend and your Slurm adapters if anything is broken!
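The copy and link steps of this workflow can be scripted. The following Python sketch is one possible way to do it, not an official tool: it copies a new binary into the archive folder under a date tag and then swaps the link by renaming a temporary symbolic link over the old one. The function name and the temporary directory used for the demonstration are hypothetical; stopping and starting the systemd service and the database backup are not covered.

```python
import os
import shutil
import tempfile


def deploy_binary(new_binary, root, tag):
    """Copy new_binary into root/archive and point root/cc-backend at it."""
    archive_dir = os.path.join(root, "archive")
    os.makedirs(archive_dir, exist_ok=True)
    target = os.path.join(archive_dir, f"{tag}-cc-backend")
    shutil.copy2(new_binary, target)

    link = os.path.join(root, "cc-backend")
    tmp_link = link + ".tmp"
    if os.path.lexists(tmp_link):
        os.remove(tmp_link)
    os.symlink(target, tmp_link)
    # Renaming over the old link swaps versions in one step; the previous
    # binary stays in the archive folder for a roll-back.
    os.replace(tmp_link, link)
    return target


# Demonstration in a temporary directory instead of /opt/monitoring.
with tempfile.TemporaryDirectory() as tmp:
    root = os.path.join(tmp, "cc-backend")
    os.makedirs(root)
    binary = os.path.join(tmp, "new-cc-backend")
    with open(binary, "w") as fh:
        fh.write("placeholder binary")
    deploy_binary(binary, root, "20231124")
    second = deploy_binary(binary, root, "20231201")
    active = os.path.realpath(os.path.join(root, "cc-backend"))
    is_active = active == os.path.realpath(second)
    archived = sorted(os.listdir(os.path.join(root, "archive")))
    print(is_active, archived)
```

A roll-back then amounts to calling the link step again with the path of an older binary from the archive folder.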

4.5 - Setup of cc-metric-store

Note

The standalone cc-metric-store shares its core storage engine with cc-backend. Its role is for distributed setups and redundancy.

Introduction

The cc-metric-store provides an in-memory metric time-series database. It is

configured via a JSON configuration file (config.json). Metrics are received

via messages using the ClusterCockpit ccMessage protocol.

It can receive messages via an HTTP REST API or by subscribing to a NATS subject.

Requesting data is possible via an HTTP REST API.

Configuration

For a complete list of configuration options see

here.

The configuration is organized into four main sections: main, metrics,

nats, and metric-store.

Minimal example of a configuration file:

{

"main": {

"addr": "0.0.0.0:8082",

"jwt-public-key": "kzfYrYy+TzpanWZHJ5qSdMj5uKUWgq74BWhQG6copP0="

},

"metrics": {

"clock": {

"frequency": 60,

"aggregation": "avg"

},

"mem_bw": {

"frequency": 60,

"aggregation": "sum"

},

"flops_any": {

"frequency": 60,

"aggregation": "sum"

},

"flops_dp": {

"frequency": 60,

"aggregation": "sum"

},

"flops_sp": {

"frequency": 60,

"aggregation": "sum"

},

"mem_used": {

"frequency": 60,

"aggregation": null

}

},

"metric-store": {

"retention-in-memory": "48h",

"memory-cap": 100,

"checkpoints": {

"file-format": "wal",

"directory": "./var/checkpoints"

},

"cleanup": {

"mode": "archive",

"directory": "./var/archive"

}

}

}

Main Section

The main section specifies the address and port on which the server should

listen (addr). Optionally, for HTTPS, paths to TLS cert and key files can be

specified via https-cert-file and https-key-file. If using a privileged

port (e.g., 443), you can specify user and group to drop root permissions

after binding. The backend-url option allows connecting to cc-backend for

querying job information. This is required to enable the dynamic memory

retention in cc-metric-store. The REST API uses JWT token based authentication.

The option jwt-public-key provides the ED25519 public key to verify signed

JWT tokens.

Metrics Section

The cc-metric-store will only accept metrics that are specified in its metric

list. The metric names must exactly match! The frequency for the metrics

specifies how incoming values are binned. If multiple values are received in the

same interval older values are overwritten, if no value is received in an

interval there is a gap. cc-metric-store can aggregate metrics across

topological entities, e.g., to compute an aggregate node scope value from core

scope metrics. The aggregation attribute specifies how the aggregate value is

computed. Resource metrics usually require sum, whereas diagnostic metrics

(e.g., clock) require avg. For clock a sum would obviously make no sense.

Metrics that are only available at node scope should set aggregation to null.
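The binning and aggregation behaviour described above can be illustrated with a short Python sketch. It is a simplified model, not the cc-metric-store implementation: samples are assumed to arrive in timestamp order, and the choice to ignore gaps when aggregating across cores is an assumption made for this example. All function names are hypothetical.

```python
def bin_values(samples, start, frequency, num_bins):
    """Place (timestamp, value) samples into fixed-width bins.

    A later sample in the same bin overwrites the earlier one; bins without
    a sample stay None (a gap).
    """
    bins = [None] * num_bins
    for timestamp, value in samples:
        index = (timestamp - start) // frequency
        if 0 <= index < num_bins:
            bins[index] = value
    return bins


def aggregate(series_per_core, mode):
    """Combine core-scope series into one node-scope series."""
    result = []
    for values in zip(*series_per_core):
        present = [v for v in values if v is not None]  # assumption: skip gaps
        if not present:
            result.append(None)
        elif mode == "sum":
            result.append(sum(present))
        elif mode == "avg":
            result.append(sum(present) / len(present))
        else:
            raise ValueError(f"unknown aggregation: {mode}")
    return result


# Two cores, frequency 60 s, three bins starting at t=0.
core0 = bin_values([(5, 1.0), (50, 2.0), (130, 4.0)], start=0, frequency=60, num_bins=3)
core1 = bin_values([(10, 3.0), (70, 5.0), (125, 6.0)], start=0, frequency=60, num_bins=3)
print(core0)                             # [2.0, None, 4.0]
print(aggregate([core0, core1], "sum"))  # [5.0, 5.0, 10.0]
print(aggregate([core0, core1], "avg"))  # [2.5, 5.0, 5.0]
```

The first bin of core0 shows the overwrite (2.0 replaces 1.0) and the second bin shows a gap. A collector interval that matches the configured frequency avoids both effects.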

Metric-Store Section

The most important configuration option is the retention-in-memory setting. It

specifies for which duration back in time metrics should be provided. This

should be long enough to cover common job durations. The memory-cap option

sets the approximate maximum memory capacity in GB to use. The memory footprint

scales with the number of nodes, the number of native metric scopes (cores,

sockets), the number of metrics, and the memory retention time divided by the

frequency.
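As a rough aid for choosing memory-cap, the scaling rule can be turned into a back-of-the-envelope estimate. The Python sketch below assumes 8 bytes per stored value and ignores all bookkeeping overhead, so it is at best a lower bound; the function name and the example cluster are hypothetical.

```python
def estimate_memory_gb(nodes, series_per_node, retention_s, frequency_s,
                       bytes_per_value=8):
    """Rough lower bound for the in-memory footprint in GB (10**9 bytes).

    series_per_node is the number of time series kept per node, i.e. the sum
    over all metrics of the number of entities at the metric's native scope.
    bytes_per_value=8 is an assumption; bookkeeping overhead is ignored.
    """
    values_per_series = retention_s // frequency_s
    total_bytes = nodes * series_per_node * values_per_series * bytes_per_value
    return total_bytes / 1e9


# Hypothetical cluster: 1000 nodes, 72 cores each, 5 core-scope metrics and
# 10 node-scope metrics, 48 h retention at 60 s frequency.
series = 5 * 72 + 10
estimate = estimate_memory_gb(1000, series, 48 * 3600, 60)
print(f"{estimate:.1f} GB")  # 8.5 GB
```

Doubling the retention time or halving the frequency interval doubles the estimate, which is why core-scope metrics at high frequency dominate the footprint.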

The num-workers option controls the number of parallel workers used for

checkpoint and archive I/O. Setting it to 0 (the default) enables automatic

setup, capped at 10.

The cc-metric-store supports checkpoints and cleanup/archiving. Checkpoints

are always performed on shutdown. To not lose data on a crash or other failure,

checkpoints are written at regular intervals configured via

checkpoints.interval (e.g. "12h"). The checkpoints.file-format option

selects the persistence format: "json" (human-readable) or "wal" (binary

Write-Ahead Log, crash-safe, default). See Checkpoint

Formats below. Checkpoints that are not needed anymore can

either be archived or deleted, controlled by the cleanup.mode setting

(archive or delete). The cleanup runs at the interval specified in

cleanup.interval.

Checkpoint Formats

The checkpoints.file-format field controls how in-memory data is persisted to

disk.

"json" — Human-readable JSON snapshots written

periodically. Each snapshot is stored as

<dir>/<cluster>/<host>/<timestamp>.json. Easy to inspect and recover manually,

but larger on disk and slower to write.

"wal" (recommended) — Binary Write-Ahead Log format designed for crash

safety. Two file types are used per host:

- current.wal: append-only binary log; every incoming data point is appended immediately. Truncated trailing records from unclean shutdowns are silently skipped on restart.

- <timestamp>.bin: binary snapshot written at each checkpoint interval, containing the complete metric state. Written atomically via a .tmp rename.

On startup the most recent .bin snapshot is loaded, then remaining WAL entries

are replayed on top. The WAL is rotated after each successful snapshot. The

"wal" format will become the only supported option in a future release. If you

are migrating from an older installation using JSON checkpoints, switch to "wal"

after a clean restart.
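The recovery idea (load the snapshot, then replay the log on top and skip a truncated tail) can be shown with a toy model in Python. The record layout used here, a 64-bit timestamp followed by a 64-bit float, is purely hypothetical and is not the on-disk format of cc-metric-store.

```python
import struct

# Hypothetical record layout: int64 timestamp, float64 value (16 bytes).
RECORD = struct.Struct("<qd")


def append_record(wal, timestamp, value):
    """Append one data point to the in-memory WAL buffer."""
    wal.extend(RECORD.pack(timestamp, value))


def recover(snapshot, wal_bytes):
    """Start from the snapshot and replay complete WAL records on top."""
    state = dict(snapshot)
    usable = len(wal_bytes) - len(wal_bytes) % RECORD.size
    for offset in range(0, usable, RECORD.size):
        timestamp, value = RECORD.unpack_from(wal_bytes, offset)
        state[timestamp] = value
    # Bytes beyond `usable` belong to a truncated trailing record and are skipped.
    return state


snapshot = {0: 1.0, 60: 2.0}            # state at the last checkpoint
wal = bytearray()
append_record(wal, 120, 3.0)
append_record(wal, 180, 4.0)
crashed = bytes(wal) + b"\x01\x02\x03"  # simulate a half-written record

state = recover(snapshot, crashed)
print(sorted(state.items()))
```

Only the half-written record is lost in this model; everything appended before the crash is restored.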

Parquet Archive

When cleanup.mode is "archive", data that ages out of the in-memory

retention window is written to Apache Parquet

files before being freed, organized as:

<cleanup.directory>/

<cluster>/

<timestamp>.parquet

One Parquet file is produced per cluster per cleanup run, consolidating all hosts. Files are compressed with Zstandard and sorted by cluster, hostname, metric, and timestamp for efficient columnar reads.

Authentication

For authentication signed (but unencrypted) JWT tokens are used. Only Ed25519/EdDSA cryptographic key-pairs are supported. A client has to sign the token with its private key, on the server side it is checked if the configured public key matches the private key with which the token was signed, if the token was altered after signing, and if the token has expired. All other token attributes are ignored.

We provide an article on how to generate JWT. There is also a background info article on JWT usage in ClusterCockpit. Tokens are cached in cc-metric-store to minimize overhead.

NATS

As an alternative to HTTP REST cc-metric-store can also receive metrics via

NATS. You can find more information about NATS in this background article.

To enable NATS in cc-metric-store add the nats section for the connection

and nats-subscriptions in the metric-store section:

{

"nats": {

"address": "nats://localhost:4222",

"username": "user",

"password": "password"

},

"metric-store": {

"nats-subscriptions": [

{

"subscribe-to": "hpc-nats",

"cluster-tag": "fritz"

}

]

}

}

The nats section configures the NATS server connection with address and

credentials. The nats-subscriptions within metric-store define which

subjects to subscribe to and how to tag incoming metrics with cluster

information in case this is not already done.

4.6 - Setup of cc-metric-collector

Introduction

cc-metric-collector is a node agent for measuring, processing and forwarding

node level metrics. It is currently mostly documented via Markdown documents in

its GitHub repository.

The configuration consists of the following parts:

- collectors: Metric sources. There is a large number of collectors available. Important and also most demanding to configure is the likwid collector for measuring hardware performance counter metrics.

- router: Rename, drop and modify metrics.

- sinks: Configuration where to send the metrics.

- receivers: Receive metrics. Useful as a proxy to connect different metric sinks. Can be left empty in most cases.

Build and deploy

Since the cc-metric-collector needs to be installed on every compute node and

requires configuration specific to the node hardware it is demanding to install

and configure. The Makefile supports generating RPM and DEB packages. There is

also a Systemd service file included which you may take as a blueprint.

More information on deployment is available here.

Collectors

You may want to have a look at our collector configuration

which includes configurations for many different systems, Intel and AMD CPUs and

NVIDIA GPUs. The general recommendation is to first decide on the metrics you

need and then figure out which collectors are required. For hardware performance

counter metrics you may want to have a look at likwid-perfctr

performance groups

for inspiration on how to compute the required derived metrics on your

target processor architecture.

Router

The router makes it possible to rename, drop and modify metrics. Top level configuration attributes (can usually be left at default):

- interval_timestamp: Metrics received within the same interval get an identical time stamp if true. Default is true.

- num_cache_intervals: Number of intervals that are cached in the router. Default is 1. Set to 0 to disable the router cache.

- hostname_tag: Set a host name different from what is returned by hostname.

- max_forward: Number of metrics read at once from a Golang channel. Default is 50. Option has to be larger than 1. Recommendation: Leave at default!

Below you find the operations that are supported by the message processor.

Rename metrics

To rename metric names add a rename_messages section mapping the old metric

name to the new name.

"process_messages" : {

"rename_messages" : {

"load_one" : "cpu_load",

"net_bytes_in_bw" : "net_bytes_in",

"net_bytes_out_bw" : "net_bytes_out",

"net_pkts_in_bw" : "net_pkts_in",

"net_pkts_out_bw" : "net_pkts_out",

"ib_recv_bw" : "ib_recv",

"ib_xmit_bw" : "ib_xmit",

"lustre_read_bytes_diff" : "lustre_read_bytes",

"lustre_read_requests_diff" : "lustre_read_requests",

"lustre_write_bytes_diff" : "lustre_write_bytes",

"lustre_write_requests_diff" : "lustre_write_requests"

}

}

Drop metrics

Sometimes collectors provide a lot of metrics that are not needed. To save

data volume metrics can be dropped. Some collectors also support to exclude

metrics at the collector level using the exclude_metrics option.

Note

If you are using the cc-metric-store all metrics that are not configured in

its metric list are also silently dropped.

"process_messages" : {

"drop_messages" : [

"load_five",

"load_fifteen",

"proc_run",

"proc_total"

]

}

Normalize unit naming

Enforce a consistent naming of units in metrics. This option should always be set to true which is the default. The metric value is not altered!

"process_messages" : {

"normalize_units": true

}

Change metric unit

The collectors usually do not alter the unit of a metric. To change the unit set

the change_unit_prefix key. The value is automatically scaled correctly,

depending on the old unit prefix.

"process_messages" : {

"change_unit_prefix": {

"name == 'mem_used'": "G",

"name == 'swap_used'": "G",

"name == 'mem_total'": "G",

"name == 'swap_total'": "G",

"name == 'cpufreq'": "M"

}

}
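What "scaled correctly" means can be shown with a few lines of Python. The sketch assumes decimal prefixes (K = 10^3, M = 10^6, G = 10^9) and uses hypothetical input values; it models the arithmetic only and is not the router implementation.

```python
PREFIX_FACTOR = {"": 1.0, "K": 1e3, "M": 1e6, "G": 1e9, "T": 1e12}


def change_unit_prefix(value, old_prefix, new_prefix):
    """Rescale value from old_prefix to new_prefix (decimal prefixes)."""
    return value * PREFIX_FACTOR[old_prefix] / PREFIX_FACTOR[new_prefix]


# mem_used reported as 16 000 000 KByte becomes 16 GByte.
mem_used_g = change_unit_prefix(16_000_000, "K", "G")
# cpufreq reported as 2 400 000 000 Hz becomes 2400 MHz.
cpufreq_m = change_unit_prefix(2_400_000_000, "", "M")
print(mem_used_g, cpufreq_m)
```

The unit prefix configured here should match the unit declared for the same metric in cluster.json, otherwise the values shown in the web frontend are off by the corresponding factor.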

Add tags

To add tags set the add_tags_if configuration attribute. The following

statement unconditionally sets a cluster name tag for all metrics.

Note

You always want to set the cluster tag if you are using cc-metric-collector

within the ClusterCockpit framework!

"process_messages" : {

"add_tags_if": [

{

"key": "cluster",

"value": "alex",

"if": "true"

}

]

}

Sinks

A simple example configuration for two sinks: HTTP cc-metric-store and NATS:

{

"fritzstore": {

"type": "http",

"url": "http://monitoring.nhr.fau.de:8082/api/write?cluster=fritz",

"jwt": "XYZ",

"idle_connection_timeout": "60s"

},

"fritznats": {

"type": "nats",

"host": "monitoring.nhr.fau.de",

"database": "fritz",

"nkey_file": "/etc/cc-metric-collector/nats.nkey"

}

}

All metrics are concurrently sent to all configured sinks.

Note

cc-metric-store only accepts timestamps in seconds

4.7 - Setup of cc-backend

Introduction

cc-backend is the main hub within the ClusterCockpit framework. Its

configuration consists of the general part in config.json and the cluster

configurations in cluster.json files, that are part of the

job archive.

The job archive is a long-term persistent storage for all job meta and metric data.

The job meta data including job statistics as well as the user data are stored

in a SQL database. Secrets such as passwords and tokens are provided as environment

variables. Environment variables can be initialized using a .env file residing

in the same directory as cc-backend. If using an .env file environment

variables that are already set take precedence.

Note (cc-backend before v1.5.0)

For versions before v1.5.0 the .env file was the only option to set

environment variables, and they could not be set by other means!

Configuration

cc-backend provides a command line switch to generate an initial template for

all required configuration files apart from the job archive:

./cc-backend -init

This will create the ./var folder, generate initial versions of the

config.json and .env files, and initialize a sqlite database file.

config.json

Below is a production configuration enabling the following functionality:

- Use HTTPS only

- Mark jobs as short jobs if they run for less than 5 minutes

- Enable authentication and user syncing via an LDAP directory

- Enable initiating a user session via a JWT token, e.g. by an IDM portal

- Drop permission after privileged ports are taken

- Enable re-sampling of time-series metric data for long jobs

- Enable NATS for job and metric store APIs

- Set metric in memory retention to 48h

- Set upper memory capping for internal metric store to 100GB

- Enable archiving of metric data

- Using S3 as job archive backend. Note: The file based archive in ./var/job-archive is the default.

Not included below but set by the default settings:

- Use compression for metric data files in job archive

- Allow access to the REST API from all IPs

{

"main": {

"addr": "0.0.0.0:443",

"https-cert-file": "/etc/letsencrypt/live/url/fullchain.pem",

"https-key-file": "/etc/letsencrypt/live/url/privkey.pem",

"user": "clustercockpit",

"group": "clustercockpit",

"short-running-jobs-duration": 300,

"enable-job-taggers": true,

"resampling": {

"minimum-points": 600,

"trigger": 180,

"resolutions": [240, 60]

},

"api-subjects": {

"subject-job-event": "cc.job.event",

"subject-node-state": "cc.node.state"

}

},

"nats": {

"address": "nats://x.x.x.x:4222",

"username": "root",

"password": "root"

},

"auth": {

"jwts": {

"max-age": "2000h"

},

"ldap": {

"url": "ldaps://hpcldap.rrze.uni-erlangen.de",

"user_base": "ou=people,ou=hpc,dc=rz,dc=uni,dc=de",

"search_dn": "cn=hpcmonitoring,ou=roadm,ou=profile,ou=hpc,dc=rz,dc=uni,dc=de",

"user_bind": "uid={username},ou=people,ou=hpc,dc=rrze,dc=uni,dc=de",

"user_filter": "(&(objectclass=posixAccount))",

"sync_interval": "24h"

}

},

"cron": {

"commit-job-worker": "1m",

"duration-worker": "5m",

"footprint-worker": "10m"

},

"archive": {

"kind": "s3",

"endpoint": "http://x.x.x.x",

"bucket": "jobarchive",

"access-key": "xx",

"secret-key": "xx",

"retention": {

"policy": "move",

"age": 365,

"location": "./var/archive"

}

},

"metric-store": {

"memory-cap": 100,

"retention-in-memory": "48h",

"cleanup": {

"mode": "archive",

"directory": "./var/archive"

},

"nats-subscriptions": [

{

"subscribe-to": "hpc-nats",

"cluster-tag": "fritz"

},

{

"subscribe-to": "hpc-nats",

"cluster-tag": "alex"

}

]

},

"ui-file": "ui-config.json"

}

Further reading:

Environment variables

Secrets are provided in terms of environment variables. The only two required

secrets are JWT_PUBLIC_KEY and JWT_PRIVATE_KEY, used for signing generated

JWT tokens and validating JWT authentication.

Please refer to the environment reference for details.

5 - How-to Guides

5.1 - Configure retention policies

Overview

Over time, the ClusterCockpit database and job archive can grow significantly, especially in production environments with high job counts. Retention policies help keep your storage at a manageable size by automatically removing or archiving old jobs.

Why use retention policies?

Without retention policies:

- The SQLite database file can grow to tens of gigabytes

- The job archive can reach terabytes in size

- Storage requirements increase indefinitely

- System performance may degrade

A typical multi-cluster setup over 5 years can accumulate:

- 75 GB for the SQLite database

- 1.4 TB for the job archive

Retention policies allow you to balance data retention needs with storage capacity.

Retention policy options

ClusterCockpit supports three retention policies:

None (default)

No automatic cleanup. Jobs are kept indefinitely.

{

"archive": {

"kind": "file",

"path": "./var/job-archive"

}

}

Delete

Permanently removes jobs older than the specified age from both the job archive and the database.

Use when:

- Storage space is limited

- You don’t need long-term job data

- You have external backups or data exports

Configuration example:

{

"archive": {

"kind": "file",

"path": "./var/job-archive",

"retention": {

"policy": "delete",

"age": 365,

"include-db": true

}

}

}

This configuration will:

- Delete jobs older than 365 days

- Remove them from both the job archive and database

- Run automatically based on the cleanup interval

Move

Moves old jobs to a separate location for long-term archival while removing them from the active database.

Use when:

- You need to preserve historical data

- You want to reduce active database size

- You can store archived data on cheaper, slower storage

Configuration example:

{

"archive": {

"kind": "file",

"path": "./var/job-archive",

"retention": {

"policy": "move",

"age": 365,

"location": "/mnt/archive/old-jobs",

"include-db": true

}

}

}

This configuration will:

- Move jobs older than 365 days to /mnt/archive/old-jobs

- Remove them from the active database

- Preserve the data for potential future analysis

Configuration parameters

archive.retention section

| Parameter | Type | Required | Default | Description |

|---|---|---|---|---|

| policy | string | Yes | - | Retention policy: none, delete, copy, or move |

| age | integer | No | 7 | Age threshold in days. Jobs older than this are affected |

| include-db | boolean | No | true | Also remove jobs from the database (not just archive) |

| omit-tagged | string | No | none | Skip tagged jobs: none = apply to all jobs, all = skip any tagged job, user = skip jobs with user-created tags (auto-tagger tags are not user tags) |

| location | string | For move/copy | - | Target directory for moved/copied jobs |

Complete configuration examples

Example 1: One-year retention with deletion

Suitable for environments with limited storage:

{

"archive": {

"kind": "file",

"path": "./var/job-archive",

"retention": {

"policy": "delete",

"age": 365,

"include-db": true

}

}

}

Example 2: Two-tier archival system

Keep 6 months active, move older data to long-term storage:

{

"archive": {

"kind": "file",

"path": "./var/job-archive",

"retention": {

"policy": "move",

"age": 180,

"location": "/mnt/slow-storage/archive",

"include-db": true

}

}

}

Example 3: S3 backend with retention

Using S3 object storage with one-year retention:

{

"archive": {

"kind": "s3",

"endpoint": "https://s3.example.com",

"bucket": "clustercockpit-jobs",

"access-key": "your-access-key",

"secret-key": "your-secret-key",

"retention": {

"policy": "delete",

"age": 365,

"include-db": true

}

}

}

How retention policies work

- Automatic execution: Retention policies run automatically based on the configured interval

- Age calculation: Jobs are evaluated based on their startTime field

- Batch processing: All jobs older than the specified age are processed in one operation

- Database cleanup: When include-db: true, corresponding database entries are removed

- Tagged job handling: Controlled by omit-tagged; use user to preserve jobs tagged by users while still processing auto-tagged jobs

- Archive handling: Based on policy (delete removes, move relocates)
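The selection logic outlined in the list above can be sketched in Python as follows. This is an illustration under assumptions, not the cc-backend code: jobs are plain dictionaries, startTime is taken to be a Unix timestamp in seconds, and the user_created flag on a tag is a hypothetical stand-in for however user tags and auto-tagger tags are distinguished internally.

```python
import time

DAY = 24 * 3600


def select_jobs(jobs, age_days, omit_tagged="none", now=None):
    """Return the ids of the jobs a retention run would process."""
    now = time.time() if now is None else now
    cutoff = now - age_days * DAY
    selected = []
    for job in jobs:
        if job["startTime"] >= cutoff:
            continue  # not old enough
        tags = job.get("tags", [])
        if omit_tagged == "all" and tags:
            continue  # skip any tagged job
        if omit_tagged == "user" and any(t["user_created"] for t in tags):
            continue  # skip jobs carrying a user-created tag
        selected.append(job["jobId"])
    return selected


now = 1_700_000_000
jobs = [
    {"jobId": 1, "startTime": now - 400 * DAY, "tags": []},
    {"jobId": 2, "startTime": now - 400 * DAY, "tags": [{"user_created": True}]},
    {"jobId": 3, "startTime": now - 400 * DAY, "tags": [{"user_created": False}]},
    {"jobId": 4, "startTime": now - 10 * DAY, "tags": []},
]
print(select_jobs(jobs, 365, "none", now))  # [1, 2, 3]
print(select_jobs(jobs, 365, "user", now))  # [1, 3]
print(select_jobs(jobs, 365, "all", now))   # [1]
```

Job 4 is never selected because it is younger than the age threshold; the three omit-tagged modes only differ in how the tagged jobs 2 and 3 are treated.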

Best practices

Planning retention periods

Consider these factors when setting the age parameter:

- Accounting requirements: Some organizations require job data for billing/auditing

- Research needs: Longer retention for research clusters where users may need historical data

- Storage capacity: Available disk space and growth rate

- Compliance: Legal or institutional data retention policies

Recommended retention periods:

| Use Case | Suggested Age |

|---|---|

| Development/testing | 30-90 days |

| Production (limited storage) | 180-365 days |

| Production (ample storage) | 365-730 days |

| Research/archival | 730+ days or use move policy |

Storage considerations

For move policy

- Mount the target location on slower, cheaper storage (e.g., spinning disks, network storage)

- Ensure sufficient space at the target location

- Consider periodic backups of the moved archive

- Document the archive structure for future retrieval

For delete policy

- Create backups first: Always backup your database and job archive before enabling deletion

- Test on a copy: Verify the retention policy works as expected on test data

- Export important data: Consider exporting summary statistics or critical job data before deletion

Monitoring and maintenance

- Track archive size: Monitor growth to adjust retention periods

du -sh /var/job-archive

du -sh /path/to/database.db

- Verify retention execution: Check logs for retention policy runs

grep -i retention /var/log/cc-backend.log

- Regular backups: Backup before changing retention settings

cp -r /var/job-archive /backup/job-archive-$(date +%Y%m%d)

cp /var/clustercockpit.db /backup/clustercockpit-$(date +%Y%m%d).db

Restoring deleted jobs

If using move policy

Jobs moved to the retention location can be restored:

- Stop cc-backend

- Use the archive-manager tool to import jobs back:

cd tools/archive-manager

go build

./archive-manager -import \
-src-config '{"kind":"file","path":"/mnt/archive/old-jobs"}' \
-dst-config '{"kind":"file","path":"./var/job-archive"}'

- Rebuild database from archive:

./cc-backend -init-db

- Restart cc-backend

If using delete policy

Jobs cannot be restored unless you have external backups. This is why backups are critical before enabling deletion.

Related tools

- archive-manager: Manage and validate job archives

- archive-migration: Migrate archives between schema versions

- Database migration: See database migration guide

Troubleshooting

Retention policy not running

Check:

- Verify archive.retention is properly configured in config.json

- Ensure cc-backend was restarted after configuration changes

- Check logs for errors:

grep -i retention /var/log/cc-backend.log

Database size not decreasing

Possible causes:

- include-db is set to false: Database entries are not being removed

- SQLite doesn’t automatically reclaim space - run VACUUM:

sqlite3 /var/clustercockpit.db "VACUUM;"

Jobs not being moved to target location

Check:

- Target directory exists and is writable

- Sufficient disk space at target location

- File permissions allow cc-backend to write to location

- Path in location is absolute, not relative

Performance impact

If retention policy execution causes performance issues:

- Consider running during off-peak hours (feature may require manual execution)

- Reduce the number of old jobs by running retention more frequently with shorter age periods

- Use more powerful hardware for the database operations

See also

5.2 - How to set up hierarchical metric collection

Overview

In large HPC clusters, it’s often impractical or undesirable to have every compute node connect directly to the central database. A hierarchical collection setup allows you to:

- Reduce database connections: Instead of hundreds of nodes connecting directly, use aggregation nodes as intermediaries

- Improve network efficiency: Aggregate metrics at rack or partition level before forwarding

- Add processing layers: Filter, transform, or enrich metrics at intermediate collection points

- Increase resilience: Buffer metrics during temporary database outages

This guide shows how to configure multiple cc-metric-collector instances where compute nodes send metrics to aggregation nodes, which then forward them to the backend database.

Architecture

flowchart TD

subgraph Rack1 ["Rack 1 - Compute Nodes"]

direction LR

node1["Node 1<br/>cc-metric-collector"]

node2["Node 2<br/>cc-metric-collector"]

node3["Node 3<br/>cc-metric-collector"]

end

subgraph Rack2 ["Rack 2 - Compute Nodes"]

direction LR

node4["Node 4<br/>cc-metric-collector"]

node5["Node 5<br/>cc-metric-collector"]

node6["Node 6<br/>cc-metric-collector"]

end

subgraph Aggregator ["Aggregation Node"]

ccrecv["cc-metric-collector<br/>(with receivers)"]

end

subgraph Backend ["Backend Server"]

ccms[("cc-metric-store")]

ccweb["cc-backend<br/>(Web Frontend)"]

end

node1 --> ccrecv

node2 --> ccrecv

node3 --> ccrecv

node4 --> ccrecv

node5 --> ccrecv

node6 --> ccrecv

ccrecv --> ccms

ccms <--> ccweb

Components

- Compute Node Collectors: Run on each compute node, collect local metrics, forward to aggregation node

- Aggregation Node: Receives metrics from multiple compute nodes, optionally processes them, forwards to cc-metric-store

- cc-metric-store: In-memory time-series database for metric storage and retrieval

- cc-backend: Web frontend that queries cc-metric-store and visualizes metrics

Configuration

Step 1: Configure Compute Nodes

Compute nodes collect local metrics and send them to the aggregation node using a network sink (NATS or HTTP).

Using NATS (Recommended)

NATS provides better performance, reliability, and built-in clustering support.

config.json:

{

"sinks-file": "/etc/cc-metric-collector/sinks.json",

"collectors-file": "/etc/cc-metric-collector/collectors.json",

"receivers-file": "/etc/cc-metric-collector/receivers.json",

"router-file": "/etc/cc-metric-collector/router.json",

"main": {

"interval": "10s",

"duration": "1s"

}

}

sinks.json:

{

"nats_aggregator": {

"type": "nats",

"host": "aggregator.example.org",

"port": "4222",

"subject": "metrics.rack1"

}

}

collectors.json (enable metrics you need):

{

"cpustat": {},

"memstat": {},

"diskstat": {},

"netstat": {},

"loadavg": {},

"tempstat": {}

}

router.json (add identifying tags):

{

"interval_timestamp": true,

"process_messages": {

"manipulate_messages": [

{

"add_base_tags": {

"cluster": "mycluster",

"rack": "rack1"

}

}

]

}

}
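To illustrate the effect of these tags, the sketch below renders a metric in the InfluxDB line protocol before and after the base tags are merged in. It is not the collector's implementation: the function names, the sample metric, and the rule that existing tags take precedence are assumptions made for illustration, and line protocol escaping is omitted.

```python
def add_base_tags(tags: dict, base_tags: dict) -> dict:
    """Merge base tags into a message's tags (assumption: existing tags win)."""
    merged = dict(base_tags)
    merged.update(tags)
    return merged


def to_line_protocol(name: str, tags: dict, value: float, timestamp: int) -> str:
    """Render one metric as a line protocol string (no escaping handled)."""
    tag_str = ",".join(f"{k}={v}" for k, v in sorted(tags.items()))
    return f"{name},{tag_str} value={value} {timestamp}"


# Hypothetical message as it might leave a collector on node1.
tags = {"hostname": "node1", "type": "node"}
base = {"cluster": "mycluster", "rack": "rack1"}

print(to_line_protocol("cpu_user", tags, 12.5, 1700000000))
# cpu_user,hostname=node1,type=node value=12.5 1700000000
print(to_line_protocol("cpu_user", add_base_tags(tags, base), 12.5, 1700000000))
# cpu_user,cluster=mycluster,hostname=node1,rack=rack1,type=node value=12.5 1700000000
```

With the rack tag attached on each compute node, the aggregation node and downstream consumers can tell where a message originated.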

receivers.json (empty for compute nodes):

{}

Using HTTP

HTTP is simpler but less efficient for high-frequency metrics.

sinks.json (HTTP alternative):

{

"http_aggregator": {

"type": "http",

"host": "aggregator.example.org",

"port": "8080",

"path": "/api/write",

"idle_connection_timeout": "5s",

"timeout": "3s"

}

}

Step 2: Configure Aggregation Node

The aggregation node receives metrics from compute nodes via receivers and forwards them to the backend database.

config.json:

{

"sinks-file": "/etc/cc-metric-collector/sinks.json",

"collectors-file": "/etc/cc-metric-collector/collectors.json",

"receivers-file": "/etc/cc-metric-collector/receivers.json",

"router-file": "/etc/cc-metric-collector/router.json",

"main": {

"interval": "10s",

"duration": "1s"

}

}

receivers.json (receive from compute nodes):

{

"nats_rack1": {

"type": "nats",

"address": "localhost",

"port": "4222",

"subject": "metrics.rack1"

},

"nats_rack2": {

"type": "nats",

"address": "localhost",

"port": "4222",

"subject": "metrics.rack2"

}

}
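Each subject used by a compute node's NATS sink needs a matching receiver on the aggregation node, otherwise those metrics are silently dropped. A minimal sketch of such a consistency check follows; the function name is hypothetical, and the comparison is an exact string match that does not account for NATS wildcard subjects.

```python
import json


def unmatched_subjects(sinks: dict, receivers: dict) -> set[str]:
    """Return NATS sink subjects that no NATS receiver subscribes to."""
    sent = {s["subject"] for s in sinks.values() if s.get("type") == "nats"}
    received = {r["subject"] for r in receivers.values() if r.get("type") == "nats"}
    return sent - received


# sinks.json from a compute node in rack 1
compute_sinks = json.loads("""
{"nats_aggregator": {"type": "nats", "host": "aggregator.example.org",
                     "port": "4222", "subject": "metrics.rack1"}}
""")

# receivers.json from the aggregation node
aggregator_receivers = json.loads("""
{"nats_rack1": {"type": "nats", "address": "localhost",
                "port": "4222", "subject": "metrics.rack1"},
 "nats_rack2": {"type": "nats", "address": "localhost",
                "port": "4222", "subject": "metrics.rack2"}}
""")

print(unmatched_subjects(compute_sinks, aggregator_receivers))  # prints set()
```

In practice the two dictionaries would be loaded from the actual sinks.json and receivers.json files with `json.load`.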

sinks.json (forward to cc-metric-store):

{

"metricstore": {

"type": "http",

"host": "backend.example.org",

"port": "8082",

"path": "/api/write",

"idle_connection_timeout": "5s",

"timeout": "5s",

"jwt": "eyJ0eXAiOiJKV1QiLCJhbGciOiJFZERTQSJ9.eyJ1c2VyIjoiYWRtaW4iLCJyb2xlcyI6WyJST0xFX0FETUlOIiwiUk9MRV9VU0VSIl19.d-3_3FZTsadPjDbVXKrQr4jNiQV-B_1-uaL_lW8d8gGb-TSAG9KdMg"

}

}

Note: The jwt value must be a token signed with the private key that corresponds to the public key configured in cc-metric-store (jwt-public-key). See the JWT generation guide for details.
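When a write is rejected, it can help to inspect what a token actually contains. The sketch below decodes the header and payload of the example token using only the Python standard library. It does not verify the signature, so it is useful for debugging only and says nothing about whether cc-metric-store will accept the token.

```python
import base64
import json


def decode_jwt_unverified(token: str) -> tuple[dict, dict]:
    """Decode a JWT's header and payload WITHOUT verifying the signature."""
    header_b64, payload_b64, _signature = token.split(".")

    def b64url_json(part: str) -> dict:
        padded = part + "=" * (-len(part) % 4)  # restore stripped padding
        return json.loads(base64.urlsafe_b64decode(padded))

    return b64url_json(header_b64), b64url_json(payload_b64)


# Example token from the sinks.json above
token = (
    "eyJ0eXAiOiJKV1QiLCJhbGciOiJFZERTQSJ9."
    "eyJ1c2VyIjoiYWRtaW4iLCJyb2xlcyI6WyJST0xFX0FETUlOIiwiUk9MRV9VU0VSIl19."
    "d-3_3FZTsadPjDbVXKrQr4jNiQV-B_1-uaL_lW8d8gGb-TSAG9KdMg"
)
header, payload = decode_jwt_unverified(token)
print(header["alg"])     # EdDSA
print(payload["roles"])  # ['ROLE_ADMIN', 'ROLE_USER']
```

The header shows that the example token uses the EdDSA signature algorithm, which matches the Ed25519 key pair configured for cc-metric-store.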

collectors.json (optionally collect local metrics):

{

"cpustat": {},

"memstat": {},

"loadavg": {}

}

router.json (optionally process metrics):

{

"interval_timestamp": false,

"num_cache_intervals": 0,

"process_messages": {

"manipulate_messages": [

{

"add_base_tags": {

"datacenter": "dc1"

}

}

]

}

}

Step 3: Set Up cc-metric-store

The backend server needs cc-metric-store to receive and store metrics from the aggregation node.

config.json (/etc/cc-metric-store/config.json):

{

"metrics": {

"cpu_user": {

"frequency": 10,

"aggregation": "avg"

},

"cpu_system": {

"frequency": 10,

"aggregation": "avg"

},

"mem_used": {

"frequency": 10,

"aggregation": null

},

"mem_total": {

"frequency": 10,

"aggregation": null

},

"net_bw": {

"frequency": 10,

"aggregation": "sum"

},

"flops_any": {

"frequency": 10,

"aggregation": "sum"

},

"mem_bw": {

"frequency": 10,

"aggregation": "sum"

}

},

"http-api": {

"address": "0.0.0.0:8082"

},

"jwt-public-key": "kzfYrYy+TzpanWZHJ5qSdMj5uKUWgq74BWhQG6copP0=",

"retention-in-memory": "48h",

"checkpoints": {

"interval": "12h",

"directory": "/var/lib/cc-metric-store/checkpoints",

"restore": "48h"

},

"archive": {

"interval": "24h",

"directory": "/var/lib/cc-metric-store/archive"

}

}

Important configuration notes:

- metrics: Must list ALL metrics you want to store. Only configured metrics are accepted.

- frequency: Must match the collection interval from cc-metric-collector (in seconds)

- aggregation: "sum" for resource metrics (bandwidth, FLOPS), "avg" for diagnostic metrics (CPU %), null for node-only metrics

- jwt-public-key: Must correspond to the private key used to sign JWT tokens in the aggregation node sink configuration

- retention-in-memory: How long to keep metrics in memory (should cover typical job durations)
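The frequency and aggregation rules above can be checked mechanically before starting the service. This is a minimal sketch with hypothetical function names; the duration parser only understands a single number followed by s, m, or h, which covers the "10s" interval used in this guide but not compound durations.

```python
def parse_seconds(interval: str) -> int:
    """Convert a simple duration such as "10s" or "1m" into whole seconds."""
    units = {"s": 1, "m": 60, "h": 3600}
    return int(interval[:-1]) * units[interval[-1]]


def check_metric_store_config(store_cfg: dict, collector_interval: str) -> list[str]:
    """Return problems found in the "metrics" section of a cc-metric-store config."""
    problems = []
    expected = parse_seconds(collector_interval)
    for name, spec in store_cfg["metrics"].items():
        if spec.get("frequency") != expected:
            problems.append(
                f"{name}: frequency {spec.get('frequency')} does not match "
                f"collector interval {expected}s"
            )
        # JSON null is loaded as None by the json module.
        if spec.get("aggregation") not in ("sum", "avg", None):
            problems.append(f"{name}: unsupported aggregation {spec.get('aggregation')!r}")
    return problems


example = {"metrics": {"cpu_user": {"frequency": 10, "aggregation": "avg"},
                       "mem_used": {"frequency": 10, "aggregation": None}}}
print(check_metric_store_config(example, "10s"))  # prints []
```

Load the real files with `json.load` and pass the "interval" value from the collector's config.json as the second argument.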

Install cc-metric-store:

# Download binary

wget https://github.com/ClusterCockpit/cc-metric-store/releases/latest/download/cc-metric-store

# Install

sudo mkdir -p /opt/monitoring/cc-metric-store

sudo mv cc-metric-store /opt/monitoring/cc-metric-store/

sudo chmod +x /opt/monitoring/cc-metric-store/cc-metric-store

# Create directories

sudo mkdir -p /var/lib/cc-metric-store/checkpoints

sudo mkdir -p /var/lib/cc-metric-store/archive

sudo mkdir -p /etc/cc-metric-store

Create systemd service (/etc/systemd/system/cc-metric-store.service):

[Unit]

Description=ClusterCockpit Metric Store

After=network.target

[Service]

Type=simple

User=cc-metricstore

Group=cc-metricstore

WorkingDirectory=/opt/monitoring/cc-metric-store

ExecStart=/opt/monitoring/cc-metric-store/cc-metric-store -config /etc/cc-metric-store/config.json

Restart=always

RestartSec=10

[Install]

WantedBy=multi-user.target

Start cc-metric-store:

# Create user

sudo useradd -r -s /bin/false cc-metricstore

sudo chown -R cc-metricstore:cc-metricstore /var/lib/cc-metric-store

# Start service

sudo systemctl daemon-reload

sudo systemctl start cc-metric-store

sudo systemctl enable cc-metric-store

# Check status

sudo systemctl status cc-metric-store

Step 4: Set Up NATS Server

The aggregation node needs a NATS server to receive metrics from compute nodes.

Install NATS:

# Using Docker

docker run -d --name nats -p 4222:4222 nats:latest

# Using the release binary from GitHub (example for Linux amd64)

curl -L https://github.com/nats-io/nats-server/releases/download/v2.10.5/nats-server-v2.10.5-linux-amd64.zip -o nats-server.zip

unzip nats-server.zip

sudo mv nats-server-v2.10.5-linux-amd64/nats-server /usr/local/bin/

NATS Configuration (/etc/nats/nats-server.conf):

listen: 0.0.0.0:4222

max_payload: 10MB

max_connections: 1000

# Optional: Enable authentication

authorization {

user: collector

password: secure_password

}

# Optional: Enable clustering for HA

cluster {

name: metrics-cluster

listen: 0.0.0.0:6222

}
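Once the server is running with this configuration, a quick way to confirm that it is reachable from a compute node is to read the INFO message that a NATS server sends to every client immediately after the TCP connection opens. The sketch below uses only the standard library; the function name is hypothetical.

```python
import json
import socket


def read_nats_info(host: str, port: int = 4222, timeout: float = 3.0) -> dict:
    """Connect to a NATS server and return the fields of its initial INFO message."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with sock.makefile("rb") as stream:
            line = stream.readline().decode("utf-8").strip()
    if not line.startswith("INFO "):
        raise RuntimeError(f"unexpected greeting: {line!r}")
    return json.loads(line[len("INFO "):])
```

For example, `read_nats_info("aggregator.example.org")` returns a dictionary whose `max_payload` field reflects the configured limit and whose `auth_required` field indicates whether the authorization block is active. A timeout or connection error points to a firewall or listen address problem rather than a collector misconfiguration.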

Start NATS:

# Systemd

sudo systemctl start nats

sudo systemctl enable nats

# Or directly