Scalable

Supports multiple clusters in one web interface. Scales to thousands of nodes and millions of jobs. Supports heterogeneous clusters with and without node sharing.

Slurm integration

Comes with a ready-to-use Slurm integration. Can be integrated with any batch job scheduler via a REST API.

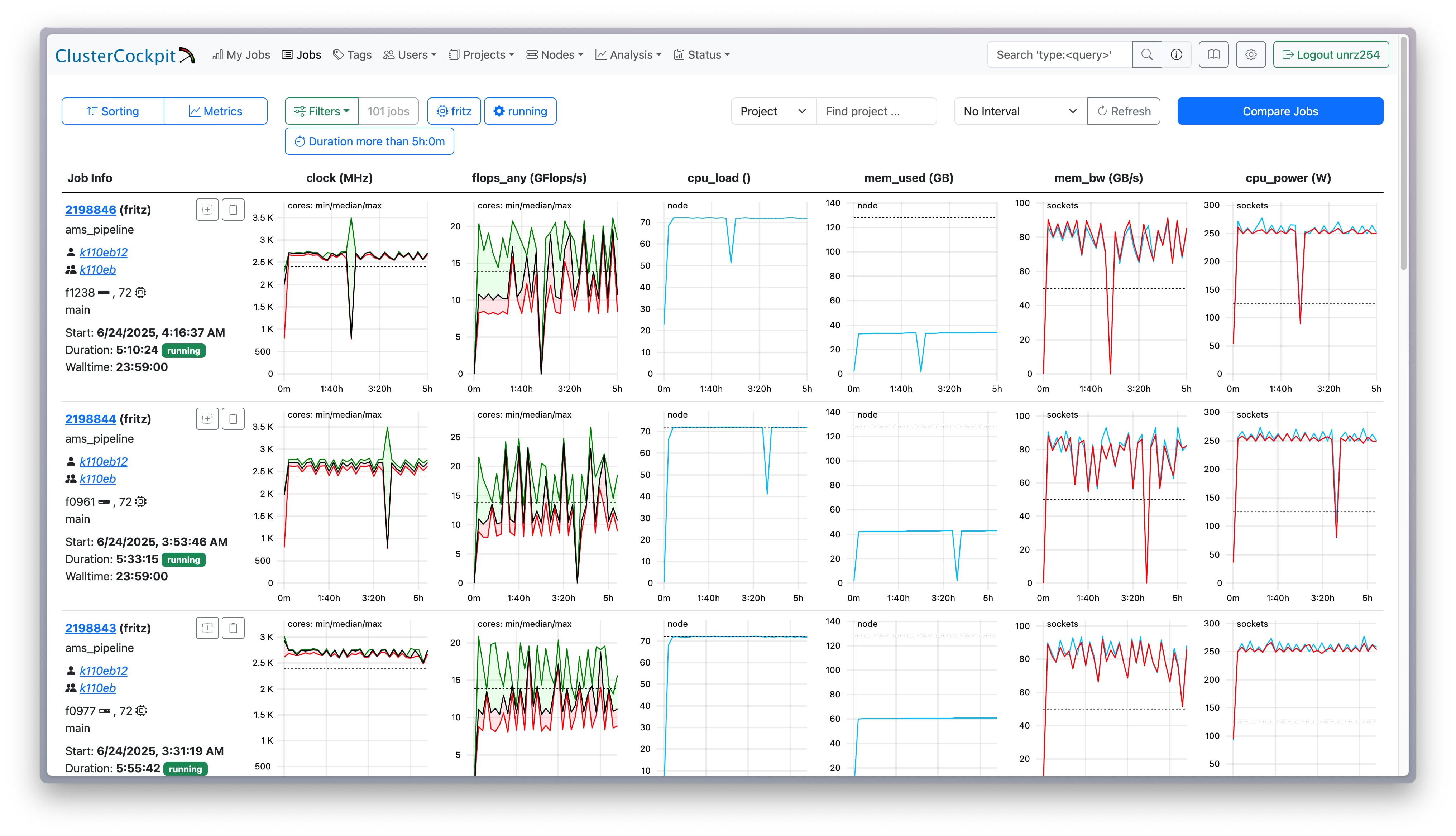

Modern Web UI

Responsive web interface with job-specific, node, user, and system views. Provides metric plots, aggregate statistics, roofline plots, a resource table, and access to job scripts. Fully user-configurable. A public dashboard is available for unauthenticated external users.

Global access to metric data

Access to a configurable set of metrics, including hardware performance counter data. Comes with a powerful HPC-centric node agent, but can also be integrated with other node agent solutions.

Authentication methods

Supports local accounts, LDAP, and Keycloak OpenID Connect. Can be integrated with existing user portals using JWT-based authentication.

User roles

Supports roles for users, project managers, support personnel, and administrators. Users can only see their own jobs. Metrics can be restricted to specific roles for fine-grained access control.
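
As a rough illustration of how role-based visibility could work, the sketch below filters jobs and metrics by role. Only the rule that users see just their own jobs comes from the description above; the role names, the `Job` and `Account` types, and the behaviour assumed for project managers, support personnel, and administrators are hypothetical and are not ClusterCockpit's actual data model.

```python
from dataclasses import dataclass

# Hypothetical role names, chosen only for this illustration.
USER, MANAGER, SUPPORT, ADMIN = "user", "manager", "support", "admin"


@dataclass(frozen=True)
class Job:
    job_id: int
    user: str
    project: str


@dataclass(frozen=True)
class Account:
    username: str
    role: str
    projects: frozenset = frozenset()  # projects a manager is responsible for


def visible_jobs(account, jobs):
    """Return the jobs this account may see under the assumed policy."""
    if account.role in (SUPPORT, ADMIN):
        # Assumption: support staff and administrators see every job.
        return list(jobs)
    if account.role == MANAGER:
        # Assumption: managers see their own jobs and those of their projects.
        return [
            j for j in jobs
            if j.project in account.projects or j.user == account.username
        ]
    # From the feature description: users can only see their own jobs.
    return [j for j in jobs if j.user == account.username]


def visible_metrics(account, metric_roles):
    """Return metric names the account may view, sorted alphabetically.

    metric_roles maps a metric name to the set of roles allowed to see it;
    an empty set means the metric is unrestricted.
    """
    return sorted(
        name for name, roles in metric_roles.items()
        if not roles or account.role in roles
    )
```

With this policy, a plain user named `alice` would get back only jobs whose `user` field is `alice`, and only those metrics whose role set is empty or contains `"user"`.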

Job sorting and filtering

Sort jobs by any job metadata attribute. Filter by job metadata and by aggregated metric data attributes.
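
To make the idea concrete, here is a minimal sketch of range filtering and sorting over job records held in memory. The field names (`num_nodes`, `duration_s`, `avg_flops`) and the helpers `filter_jobs` and `sort_jobs` are invented for this example; the real web interface presumably runs such queries against its own backend.

```python
from operator import itemgetter


def filter_jobs(jobs, **ranges):
    """Keep jobs whose attributes fall inside every given (low, high) range.

    Each keyword names a job attribute (metadata or an aggregated metric)
    and maps to an inclusive (low, high) tuple.
    """
    def in_all_ranges(job):
        return all(low <= job[key] <= high for key, (low, high) in ranges.items())

    return [job for job in jobs if in_all_ranges(job)]


def sort_jobs(jobs, key, descending=False):
    """Return a new list of jobs sorted by one attribute."""
    return sorted(jobs, key=itemgetter(key), reverse=descending)


# Hypothetical job records for illustration only.
example_jobs = [
    {"job_id": 101, "user": "alice", "num_nodes": 4, "duration_s": 3600, "avg_flops": 120.5},
    {"job_id": 102, "user": "bob", "num_nodes": 1, "duration_s": 600, "avg_flops": 15.0},
    {"job_id": 103, "user": "carol", "num_nodes": 8, "duration_s": 7200, "avg_flops": 310.0},
]

# Jobs on 2 to 8 nodes, ordered by average FLOP rate, highest first.
selected = sort_jobs(filter_jobs(example_jobs, num_nodes=(2, 8)), "avg_flops", descending=True)
```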

Unified search bar

A unified search bar lets you search for job ids, job names, project ids, usernames, and names.

Job tagging

Jobs can be tagged manually or automatically. The built-in job tagger detects known applications (e.g. MATLAB, GROMACS) and flags pathological jobs automatically. Tags are grouped by type and have a configurable scope attribute for visibility control.

Node state monitoring

Live node health tracking with per-metric status and historical state retention. The systems view provides node lists with filtering, paging, and a health status overview per cluster and subcluster.

Flexible job archive

Supports multiple archive backends: JSON files, columnar Parquet files (with zstd compression), SQLite blob storage, and S3-compatible object storage. Cluster- and subcluster-specific retention policies are supported.
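
One way to picture cluster- and subcluster-specific retention is a lookup that prefers the most specific rule. The sketch below assumes a purely age-based policy; the policy table, the cluster names, the default value, and the function names are all hypothetical and do not reflect the actual configuration format.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy table: (cluster, subcluster) -> maximum age in days.
# A subcluster of None stands for the cluster-wide default.
RETENTION_DAYS = {
    ("alpha", None): 365,
    ("alpha", "gpu"): 90,
}
FALLBACK_DAYS = 180  # assumed global default when no rule matches


def retention_days(cluster, subcluster):
    """Resolve the retention period, most specific rule first."""
    if (cluster, subcluster) in RETENTION_DAYS:
        return RETENTION_DAYS[(cluster, subcluster)]
    return RETENTION_DAYS.get((cluster, None), FALLBACK_DAYS)


def is_expired(job_end, cluster, subcluster, now):
    """Return True if the job ended longer ago than its retention period."""
    return now - job_end > timedelta(days=retention_days(cluster, subcluster))


# Example: a job that finished 100 days before the reference time.
now = datetime(2025, 1, 1, tzinfo=timezone.utc)
job_end = now - timedelta(days=100)
gpu_expired = is_expired(job_end, "alpha", "gpu", now)  # 90-day rule applies
cpu_expired = is_expired(job_end, "alpha", "cpu", now)  # falls back to 365 days
```

The subcluster rule wins over the cluster default, so the same job age can be expired on one subcluster and still retained on another.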

Real-time NATS API

Publish and subscribe to real-time job start/stop events and node state changes via NATS messaging. Enables event-driven integrations and live dashboards without polling.
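
Subscribers in NATS select events by subject, where `*` matches exactly one dot-separated token and `>` matches one or more trailing tokens. The sketch below reimplements that matching rule in plain Python to show how an integration might pick out job and node events. It does not use a NATS client, and the subject names such as `cc.jobs.start` are made up for illustration; the subjects ClusterCockpit actually publishes on may differ.

```python
def subject_matches(pattern, subject):
    """Return True if a NATS-style subject pattern matches a concrete subject."""
    pattern_tokens = pattern.split(".")
    subject_tokens = subject.split(".")
    for i, token in enumerate(pattern_tokens):
        if token == ">":
            # '>' is only valid as the last token and needs at least
            # one remaining subject token to match.
            return i == len(pattern_tokens) - 1 and len(subject_tokens) > i
        if i >= len(subject_tokens):
            return False  # subject is shorter than the pattern
        if token != "*" and token != subject_tokens[i]:
            return False  # literal token mismatch
    # Without '>', both sides must have the same number of tokens.
    return len(pattern_tokens) == len(subject_tokens)


def matching_subscriptions(subject, subscriptions):
    """Return the names of all subscriptions whose pattern matches the subject."""
    return [name for name, pattern in subscriptions.items()
            if subject_matches(pattern, subject)]


# Hypothetical subscriptions of an event-driven integration.
subscriptions = {
    "job_events": "cc.jobs.*",
    "alpha_nodes": "cc.nodes.alpha.>",
    "everything": "cc.>",
}
```

In a real deployment the NATS server performs this matching; a client only passes the pattern when subscribing.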

HPC Centers using ClusterCockpit

TU Darmstadt, Ruhr University Bochum, University Duisburg-Essen